How to Add API Keys to Your SaaS with Convex

Just a guy who loves to write code and watch anime.

Introduction

If you want to let users call your service from their own app, that is very possible.

The hard part is not generating a key. The hard part is doing the full system well. Secure storage. One-time key reveal. Auth. Idempotency. Queueing. Polling. Limits. Analytics. Revocation.

This post walks through it in chronological order. The examples use Convex and TypeScript.

What we are building

The product shape looks like this.

A user opens your app and creates an API key.

Your server generates the full key.

Your database stores only metadata and a hash.

The full key is shown once.

The client calls your API with `Authorization: Bearer ...`.

Your backend authenticates the key.

The backend creates one logical request with an idempotency key.

The work runs through an async queue.

The client polls request status.

The client fetches the result when it is ready.

This is a strong shape for any product where the work takes time. AI generation. Video processing. Data pipelines. Anything where you do not want to hold an HTTP connection open for thirty seconds.

Step 1. Decide what owns the keys

In most products the account owns the keys. Not one browser session. Not one project. Not one device.

This is usually the best launch model. Billing is account level. Analytics are account level. Queueing is account level. One user can create many keys, for example for prod, staging, CI, and local dev.

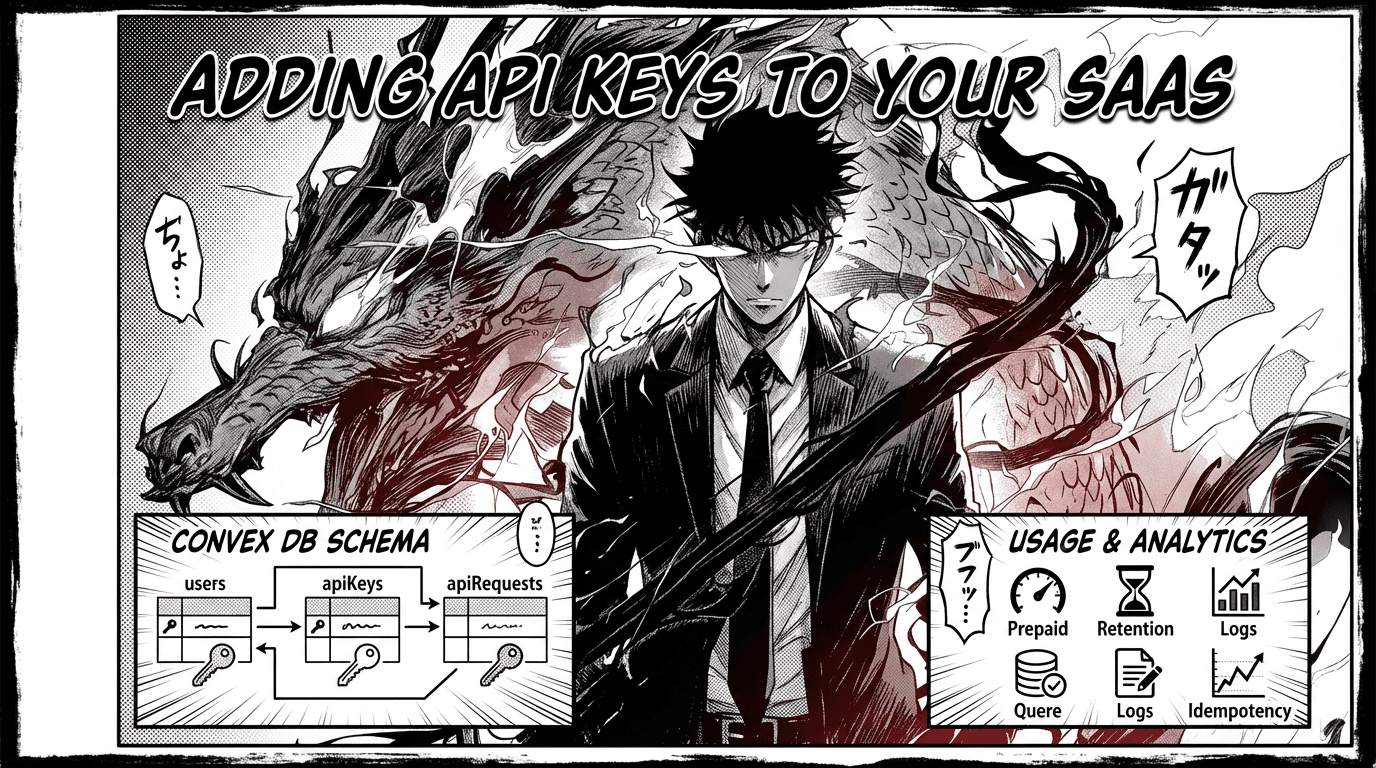

Step 2. Add the core tables

You need three tables: users for account-level usage, apiKeys for key metadata and hashes, and apiRequests for one row per logical request. That last one is important. One row per logical request. Not one row per retry.

```typescript
// convex/schema.ts

import { defineSchema, defineTable } from "convex/server";
import { v } from "convex/values";

export default defineSchema({
  // ── Account level data ──
  users: defineTable({
    email: v.optional(v.string()),
    name: v.optional(v.string()),

    // how many API requests this account can still make
    apiGenerationsRemaining: v.optional(v.float64()),

    // how many requests are currently reserved for this account
    // (queued plus processing). used for prepaid capacity checks and queue state
    apiReservedRequestCount: v.optional(v.float64()),
  })
    // createApiKey and revokeApiKey look up the signed-in user by email
    .index("by_email", ["email"]),

  // ── API key metadata ──
  // we never store the raw secret here. only a hash.
  apiKeys: defineTable({
    userId: v.id("users"), // which account owns this key
    name: v.string(), // human label like "production" or "CI"
    keyId: v.string(), // short public id. used to look up the key fast
    secretHash: v.string(), // sha256 of "pepper:secret". the only secret-derived value we store
    prefix: v.string(), // "sk_live_ab12cd34". safe to show in UI
    last4: v.string(), // last 4 chars of the secret. helps users identify keys
    createdAt: v.float64(),
    lastUsedAt: v.optional(v.float64()), // updated on each authenticated request
    revokedAt: v.optional(v.float64()), // set when the key is revoked. unset means active
  })
    .index("by_user", ["userId", "createdAt"]) // list all keys for an account
    .index("by_key_id", ["keyId"]), // fast lookup during auth

  // ── One row per logical request ──
  // this row is the queue item, the idempotency record, and the analytics record.
  // one row per logical request. not one row per retry.
  // that is why this design is clean. one table does three jobs.
  apiRequests: defineTable({
    userId: v.id("users"), // which account made this request
    apiKeyId: v.id("apiKeys"), // which key was used. useful for per key analytics
    requestId: v.string(), // public id returned to the client for polling
    idempotencyKey: v.string(), // client provided. prevents duplicate work on retry
    requestBodyHash: v.string(), // hash of the request body. used to detect conflicts
    // same idempotency key + different body = 409
    input: v.string(), // the full request payload
    inputPreview: v.string(), // truncated version for dashboard lists and logs

    // queue state machine
    status: v.union(
      v.literal("queued"), // accepted but not started yet
      v.literal("processing"), // picked up by the dispatcher
      v.literal("completed"), // finished successfully
      v.literal("failed"), // something went wrong
    ),

    // error details. only populated on failure
    statusCode: v.optional(v.float64()),
    errorCode: v.optional(v.string()),
    errorMessage: v.optional(v.string()),

    latencyMs: v.optional(v.float64()), // how long the actual work took in milliseconds
    resultStorageId: v.optional(v.id("_storage")), // where the output file lives in Convex storage
    storedUntil: v.optional(v.float64()), // when the output file should be deleted
    outputExpiredAt: v.optional(v.float64()), // set after the file is actually removed

    // timestamps for the request lifecycle
    startedAt: v.optional(v.float64()), // when processing began
    completedAt: v.optional(v.float64()), // when processing finished
    createdAt: v.float64(), // when the request was first accepted
  })
    // find existing request for idempotency check
    .index("by_user_and_idempotency_key", ["userId", "idempotencyKey"])
    // fast lookup for the polling endpoint
    .index("by_request_id", ["requestId"])
    // find queued or processing requests for the dispatcher
    .index("by_user_and_status_and_created_at", ["userId", "status", "createdAt"])
    // list all requests for an account. sorted by time
    .index("by_user_and_created_at", ["userId", "createdAt"]),
});
```

Step 3. Generate keys on the server

Do not let the client generate production API keys. Generate them on the server.

A good key has three parts. A fixed public prefix. A short key id. A long random secret.

sk_live_ab12cd34_xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

The prefix makes it easy to identify in logs and support. The key id lets you index quickly. The secret is the real auth.

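```typescript
// convex/shared/apiKeys.ts
// Uses the Web Crypto API (crypto.getRandomValues, crypto.subtle) instead of node:crypto,
// because these helpers run inside mutations and HTTP actions, which use Convex's
// default runtime rather than Node.js.

const API_KEY_PREFIX = "sk_live";

function toHex(bytes: Uint8Array): string {
  return Array.from(bytes, (b) => b.toString(16).padStart(2, "0")).join("");
}

function toBase64Url(bytes: Uint8Array): string {
  let binary = "";
  for (const b of bytes) binary += String.fromCharCode(b);
  return btoa(binary).replace(/\+/g, "-").replace(/\//g, "_").replace(/=+$/, "");
}

function randomBytes(length: number): Uint8Array {
  return crypto.getRandomValues(new Uint8Array(length));
}

async function sha256Hex(text: string): Promise<string> {
  const digest = await crypto.subtle.digest("SHA-256", new TextEncoder().encode(text));
  return toHex(new Uint8Array(digest));
}

export function createApiKeyId() {
  return toHex(randomBytes(4)); // 8 lowercase hex chars
}

export function createApiKeySecret() {
  return toBase64Url(randomBytes(32)); // 256 random bits, 43 url-safe chars
}

export function createFullApiKey() {
  const keyId = createApiKeyId();
  const secret = createApiKeySecret();
  return {
    keyId,
    secret,
    token: `${API_KEY_PREFIX}_${keyId}_${secret}`,
    prefix: `${API_KEY_PREFIX}_${keyId}`,
    last4: secret.slice(-4),
  };
}

export async function hashApiKeySecret(args: { pepper: string; secret: string }) {
  return sha256Hex(`${args.pepper}:${args.secret}`);
}

export function parseApiKeyToken(token: string) {
  // key id is exactly 8 hex chars. the secret is base64url, which may itself contain "_" and "-"
  const match = token.match(/^sk_live_([0-9a-f]{8})_([A-Za-z0-9_-]+)$/);
  if (!match) {
    return null;
  }
  return {
    keyId: match[1],
    secret: match[2],
  };
}

export async function hashRequestBody(body: unknown) {
  return sha256Hex(JSON.stringify(body));
}
```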

Step 4. Use a pepper env var

This is one of the most important parts.

Do not store the raw secret. Store a hash of the secret. And hash it with a server only pepper.

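```
API_KEY_PEPPER=super-long-random-secret
```

Generate it in your terminal with openssl, then set it as an environment variable on your Convex deployment, either in the dashboard or with `npx convex env set API_KEY_PEPPER <value>`.

```bash
openssl rand -base64 32
```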

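If your database leaks, attackers do not get raw API keys. They cannot paste a stored hash into a request, because your server hashes whatever token it receives. And without the pepper, they cannot even test guesses against the stored hashes. Your server still controls the real trust boundary.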

Keep the pepper only on the server. Never log it. Never send it to the browser.

Step 5. Build key creation

This flow should be boring. Boring is good.

User enters a name. Server generates the key. Server stores only metadata and hash. UI shows the full token once. UI tells the user it cannot be viewed again.

```typescript
// convex/developerApi/mutations.ts

import { v } from "convex/values";
import { mutation } from "../_generated/server";
import { createFullApiKey, hashApiKeySecret } from "../shared/apiKeys";

export const createApiKey = mutation({
  args: {
    name: v.string(),
  },
  handler: async (ctx, args) => {
    const identity = await ctx.auth.getUserIdentity();
    const email = identity?.email;
    if (!email) {
      throw new Error("Unauthorized");
    }

    // relies on the by_email index on users
    const user = await ctx.db
      .query("users")
      .withIndex("by_email", (q) => q.eq("email", email))
      .first();
    if (!user) {
      throw new Error("User not found");
    }

    const name = args.name.trim();
    if (!name) {
      throw new Error("Key name is required");
    }

    const pepper = process.env.API_KEY_PEPPER;
    if (!pepper) {
      throw new Error("API_KEY_PEPPER is missing");
    }

    const key = createFullApiKey();
    const secretHash = await hashApiKeySecret({ pepper, secret: key.secret });
    const createdAt = Date.now();

    // store metadata and the hash. never the secret or the full token.
    await ctx.db.insert("apiKeys", {
      userId: user._id,
      name,
      keyId: key.keyId,
      secretHash,
      prefix: key.prefix,
      last4: key.last4,
      createdAt,
    });

    // the only time the full token ever leaves the server
    return {
      name,
      token: key.token,
      prefix: key.prefix,
      last4: key.last4,
      createdAt,
    };
  },
});
```

Show the token once. Later queries should return only the name, prefix, last4, and timestamps.
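A simple way to enforce that rule is to build list responses from an explicit allowlist of fields instead of returning rows as-is. The sketch below is a pure function, assuming rows shaped like the apiKeys table from Step 2 (with `_id` simplified to a string); `toApiKeyListItem` and `ApiKeyListItem` are hypothetical names, not part of Convex.

```typescript
// A stored API key row, mirroring the apiKeys table from Step 2.
// _id is simplified to a string here; in Convex it would be Id<"apiKeys">.
interface ApiKeyRow {
  _id: string;
  userId: string;
  name: string;
  keyId: string;
  secretHash: string;
  prefix: string;
  last4: string;
  createdAt: number;
  lastUsedAt?: number;
  revokedAt?: number;
}

// What a "list my keys" query is allowed to return. No secretHash, no token.
interface ApiKeyListItem {
  id: string;
  name: string;
  prefix: string;
  last4: string;
  createdAt: number;
  lastUsedAt: number | null;
  revokedAt: number | null;
  active: boolean;
}

// Copy fields one by one (an allowlist) instead of spreading the row,
// so a column added to the table later cannot leak by accident.
function toApiKeyListItem(row: ApiKeyRow): ApiKeyListItem {
  return {
    id: row._id,
    name: row.name,
    prefix: row.prefix,
    last4: row.last4,
    createdAt: row.createdAt,
    lastUsedAt: row.lastUsedAt ?? null,
    revokedAt: row.revokedAt ?? null,
    active: row.revokedAt === undefined,
  };
}
```

Inside a Convex query you would read the account's rows through the by_user index and return `rows.map(toApiKeyListItem)`.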

Step 6. Authenticate requests with the key

Use the Authorization header.

Authorization: Bearer sk_live_ab12cd34_xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

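```typescript
// inside a Convex HTTP action helper (convex/apiAuth.ts is an illustrative file name)

import type { ActionCtx } from "./_generated/server";
import { internal } from "./_generated/api";
import { parseApiKeyToken, hashApiKeySecret } from "./shared/apiKeys";

export async function authenticateApiKeyRequest(ctx: ActionCtx, request: Request) {
  // expect exactly "Bearer <token>". anything else is treated as missing
  const authHeader = request.headers.get("Authorization") ?? "";
  const token = authHeader.match(/^Bearer\s+(\S+)$/i)?.[1];
  if (!token) {
    return null;
  }

  const parsed = parseApiKeyToken(token);
  if (!parsed) {
    return null; // malformed token
  }

  const pepper = process.env.API_KEY_PEPPER;
  if (!pepper) {
    throw new Error("API_KEY_PEPPER is missing");
  }

  const secretHash = await hashApiKeySecret({ pepper, secret: parsed.secret });

  // looks the key up by keyId, compares hashes, and rejects revoked keys.
  // it is a mutation so it can also update lastUsedAt
  const authResult = await ctx.runMutation(
    internal.publicApi.mutations.authenticateApiKey,
    { keyId: parsed.keyId, secretHash },
  );
  if (authResult.kind !== "authenticated") {
    return null;
  }

  // also hand back keyId and secretHash, which the endpoint passes on to claimRequest
  return { ...authResult, keyId: parsed.keyId, secretHash };
}
```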

Auth should reject malformed tokens, invalid keys, and revoked keys. And it should never log raw keys.
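The `authenticateApiKey` mutation itself is not shown in this post. Here is a minimal sketch of the decision it has to make once it has loaded the row through the by_key_id index, written as a pure function so it can be tested on its own. `decideApiKeyAuth` and `timingSafeEqualHex` are hypothetical helpers; the constant-time comparison is a common precaution, not something the original code does.

```typescript
// The apiKeys fields that auth needs (see the Step 2 schema).
interface StoredKey {
  userId: string;
  apiKeyId: string; // the row's _id
  secretHash: string;
  revokedAt?: number;
}

type AuthDecision =
  | { kind: "authenticated"; userId: string; apiKeyId: string }
  | { kind: "invalid" }
  | { kind: "revoked" };

// Compare two hex digests without returning early on the first mismatch,
// so response time does not reveal how many leading characters matched.
function timingSafeEqualHex(a: string, b: string): boolean {
  if (a.length !== b.length) return false;
  let diff = 0;
  for (let i = 0; i < a.length; i++) {
    diff |= a.charCodeAt(i) ^ b.charCodeAt(i);
  }
  return diff === 0;
}

function decideApiKeyAuth(stored: StoredKey | null, presentedHash: string): AuthDecision {
  // Unknown keyId gets the same answer as a wrong secret.
  if (!stored) return { kind: "invalid" };
  if (!timingSafeEqualHex(stored.secretHash, presentedHash)) return { kind: "invalid" };
  // Check revocation only after the secret matches, so callers without
  // the secret cannot learn which key ids exist or are revoked.
  if (stored.revokedAt !== undefined) return { kind: "revoked" };
  return { kind: "authenticated", userId: stored.userId, apiKeyId: stored.apiKeyId };
}
```

On `"authenticated"`, the mutation would also patch `lastUsedAt`. Both `"invalid"` and `"revoked"` end up as the same 401 at the HTTP layer.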

Step 7. Add idempotency before you add real usage

This is where many APIs get weak.

If a client times out and retries, you do not want to create a second expensive operation. You need an idempotency key.

```
Idempotency-Key: 9e5a61e2-4e7c-4f7d-a984-5d1ce4c6d1a2
```

The rule is simple. One idempotency key means one logical request. Same key plus same body gives the same logical request. Same key plus different body gives 409. Retries are safe. Billing is safe.

```typescript
export function evaluateExistingRequest(args: {
  existingRequest: {
    requestId: string;
    requestBodyHash: string;
    status: "queued" | "processing" | "completed" | "failed";
  } | null;
  requestBodyHash: string;
}) {
  if (!args.existingRequest) {
    return { kind: "create_new" as const };
  }

  if (args.existingRequest.requestBodyHash !== args.requestBodyHash) {
    return {
      kind: "idempotency_conflict" as const,
      requestId: args.existingRequest.requestId,
    };
  }

  return {
    kind: "reuse_existing" as const,
    requestId: args.existingRequest.requestId,
    status: args.existingRequest.status,
  };
}
```

This one idea saves a lot of pain later.
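One subtlety: the body hash is computed from `JSON.stringify(body)`, which keeps object keys in the order they were parsed. A client that retries with `{"b":2,"a":1}` instead of `{"a":1,"b":2}` would get a spurious 409. A minimal sketch of a canonical serializer that sorts keys at every level, assuming the body came from `request.json()` (so it contains only JSON values); `canonicalJson` is a hypothetical helper.

```typescript
type JsonValue = null | boolean | number | string | JsonValue[] | { [key: string]: JsonValue };

// Serialize a JSON value with object keys sorted at every level, so that
// logically equal bodies produce the same string (and therefore the same hash).
function canonicalJson(value: JsonValue): string {
  if (value === null || typeof value !== "object") {
    return JSON.stringify(value);
  }
  if (Array.isArray(value)) {
    // Array order is meaningful, so it is kept as-is.
    return `[${value.map(canonicalJson).join(",")}]`;
  }
  const keys = Object.keys(value).sort();
  return `{${keys.map((key) => `${JSON.stringify(key)}:${canonicalJson(value[key])}`).join(",")}}`;
}
```

With this in place, `hashRequestBody` would hash `canonicalJson(body)` instead of `JSON.stringify(body)`.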

Step 8. Choose sync or async

If your API work is fast and predictable, sync can be fine.

If your work can queue, call vendors, or take time, async is usually the safer default. You do not keep HTTP open too long. Retries are easier. Concurrency control is easier. Polling gives better visibility.

The clean async shape is three endpoints.

```
POST /api/v1/jobs
GET /api/v1/requests/:requestId
GET /api/v1/requests/:requestId/result
```

Step 9. Build the queue

There is no separate queue system here. Your apiRequests table is the queue.

Every row has a status field. That field is the state machine.

```
queued → processing → completed
                    → failed
```
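Because the status field is the state machine, it helps to make the allowed moves explicit in code. A minimal sketch, assuming the four statuses from the Step 2 schema; `canTransition` and `assertTransition` are hypothetical helpers that mutations like promoteQueuedRequests, markCompleted, and markFailed could call before patching a row.

```typescript
type RequestStatus = "queued" | "processing" | "completed" | "failed";

// Allowed next states for each state. "completed" and "failed" are terminal.
const ALLOWED_TRANSITIONS: Record<RequestStatus, readonly RequestStatus[]> = {
  queued: ["processing"],
  processing: ["completed", "failed"],
  completed: [],
  failed: [],
};

function canTransition(from: RequestStatus, to: RequestStatus): boolean {
  return ALLOWED_TRANSITIONS[from].includes(to);
}

// Throwing inside a Convex mutation rolls the whole mutation back,
// so an illegal patch never reaches the database.
function assertTransition(from: RequestStatus, to: RequestStatus): void {
  if (!canTransition(from, to)) {
    throw new Error(`Illegal status transition: ${from} -> ${to}`);
  }
}
```

A guard like this catches bugs such as a late, duplicate `markFailed` call overwriting a request that already completed.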

Here is how the pieces fit together.

The HTTP endpoint receives the request. It authenticates the key, checks idempotency, and inserts one row with status = "queued". Then it kicks the dispatcher and returns 202 immediately. The HTTP connection is done.

The dispatcher runs as a background action. It looks at the table and counts how many rows for this user are currently "processing". If that count is under the concurrency limit, it takes the oldest "queued" rows and flips them to "processing". Then, for each promoted row, it schedules the actual job.

This is the important part. Promoting and scheduling are two separate steps. First you claim the row by changing its status. Then you schedule the work. That way two dispatchers cannot pick up the same row.

The background job does the real work. When it finishes it sets the status to "completed" or "failed".

The polling endpoint just reads the row and returns its current status.

So the concurrency limit lives in the dispatcher. If the user already has 5 rows in "processing", nothing new gets promoted. The queued rows just wait until a slot opens.

Here is the HTTP endpoint.

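```typescript
// convex/http.ts

import { httpRouter } from "convex/server";
import { httpAction } from "./_generated/server";
import { internal } from "./_generated/api";
import { hashRequestBody } from "./shared/apiKeys";
// the Step 6 helper. "./apiAuth" is an illustrative path; import it from wherever it lives
import { authenticateApiKeyRequest } from "./apiAuth";

const http = httpRouter();

// every response is JSON with the matching Content-Type header
function json(body: unknown, status: number) {
  return new Response(JSON.stringify(body), {
    status,
    headers: { "Content-Type": "application/json" },
  });
}

http.route({
  path: "/api/v1/jobs",
  method: "POST",
  handler: httpAction(async (ctx, request) => {
    const auth = await authenticateApiKeyRequest(ctx, request);
    if (!auth) {
      return json({ error: { code: "invalid_api_key" } }, 401);
    }

    const idempotencyKey = request.headers.get("Idempotency-Key")?.trim();
    if (!idempotencyKey) {
      return json({ error: { code: "invalid_request" } }, 400);
    }

    // reject bodies that are not JSON or have no string "input"
    let body: { input?: unknown } | null;
    try {
      body = await request.json();
    } catch {
      return json({ error: { code: "invalid_request" } }, 400);
    }
    const input = body?.input;
    if (typeof input !== "string") {
      return json({ error: { code: "invalid_request" } }, 400);
    }

    const requestBodyHash = await hashRequestBody(body);

    // this mutation inserts the row as "queued"
    // and kicks the dispatcher
    const result = await ctx.runMutation(internal.publicApi.mutations.claimRequest, {
      keyId: auth.keyId,
      secretHash: auth.secretHash,
      idempotencyKey,
      input,
      requestBodyHash,
    });

    switch (result.kind) {
      case "accepted":
        return json({ request_id: result.requestId, status: "queued" }, 202);
      case "already_exists":
        // a retry of the same logical request. same id, current status
        return json({ request_id: result.requestId, status: result.status }, 200);
      case "conflict":
        return json({ error: { code: "idempotency_conflict" }, request_id: result.requestId }, 409);
      default:
        return json({ error: { code: "invalid_api_key" } }, 401);
    }
  }),
});

export default http;
```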

Here is the dispatcher. It promotes queued rows and schedules the actual work.

```typescript
// convex/publicApi/actions.ts

import { v } from "convex/values";
import { internalAction } from "../_generated/server";
import { internal } from "../_generated/api";

export const dispatchQueuedRequests = internalAction({
  args: {
    userId: v.id("users"),
  },
  handler: async (ctx, args) => {
    // this mutation checks concurrency limits,
    // flips "queued" rows to "processing",
    // and returns the jobs that were promoted
    const jobs = await ctx.runMutation(
      internal.publicApi.mutations.promoteQueuedRequests,
      { userId: args.userId },
    );

    // schedule the actual work for each promoted job
    await Promise.all(
      jobs.map((job) =>
        ctx.scheduler.runAfter(0, internal.publicApi.actions.executeJob, job),
      ),
    );
  },
});
```

But where does the dispatcher get called? Inside claimRequest, the mutation the HTTP endpoint runs. Here is a simplified version.

```typescript
// convex/publicApi/mutations.ts

import { v } from "convex/values";
import { internalMutation } from "../_generated/server";
import { internal } from "../_generated/api";
// hypothetical module path: authenticateApiKey, evaluateExistingRequest (Step 7),
// and generateRequestId are your own helpers. use wherever you keep them
import { authenticateApiKey, evaluateExistingRequest, generateRequestId } from "./helpers";

export const claimRequest = internalMutation({
  args: {
    keyId: v.string(),
    secretHash: v.string(),
    idempotencyKey: v.string(),
    input: v.string(),
    requestBodyHash: v.string(),
  },
  handler: async (ctx, args) => {
    // authenticate the key
    const authResult = await authenticateApiKey(ctx, {
      keyId: args.keyId,
      secretHash: args.secretHash,
    });
    if (authResult.kind !== "authenticated") {
      return { kind: "unauthorized" as const };
    }

    // check idempotency. if this key was already used, return the existing request.
    // this read and the insert below run in one transaction, so two concurrent
    // retries with the same key cannot both insert a row
    const existing = await ctx.db
      .query("apiRequests")
      .withIndex("by_user_and_idempotency_key", (q) =>
        q.eq("userId", authResult.userId).eq("idempotencyKey", args.idempotencyKey),
      )
      .first();

    const evaluation = evaluateExistingRequest({
      existingRequest: existing,
      requestBodyHash: args.requestBodyHash,
    });

    if (evaluation.kind === "reuse_existing") {
      return {
        kind: "already_exists" as const,
        requestId: evaluation.requestId,
        status: evaluation.status,
      };
    }
    if (evaluation.kind === "idempotency_conflict") {
      return { kind: "conflict" as const, requestId: evaluation.requestId };
    }

    // insert the new request as "queued"
    const requestId = generateRequestId();
    await ctx.db.insert("apiRequests", {
      userId: authResult.userId,
      apiKeyId: authResult.apiKeyId,
      requestId,
      idempotencyKey: args.idempotencyKey,
      requestBodyHash: args.requestBodyHash,
      input: args.input,
      inputPreview: args.input.slice(0, 100),
      status: "queued",
      createdAt: Date.now(),
    });

    // kick the dispatcher as a background action.
    // scheduling from a mutation only takes effect if the mutation commits
    await ctx.scheduler.runAfter(
      0,
      internal.publicApi.actions.dispatchQueuedRequests,
      { userId: authResult.userId },
    );

    return { kind: "accepted" as const, requestId };
  },
});
```

The key line is ctx.scheduler.runAfter(0, ...). That schedules the dispatcher to run as a separate background action as soon as the mutation commits. The HTTP response does not wait for it. It returns 202 right away.

Now here is promoteQueuedRequests. This is where the real queue logic lives.

```typescript
// convex/publicApi/mutations.ts
// (same file as claimRequest; needs v from "convex/values"
// and internalMutation from "../_generated/server")

export const promoteQueuedRequests = internalMutation({
  args: {
    userId: v.id("users"),
  },
  handler: async (ctx, args) => {
    const maxConcurrent =
      Number(process.env.API_MAX_CONCURRENT_REQUESTS_PER_USER) || 5;

    // count how many requests are currently processing for this user
    const processing = await ctx.db
      .query("apiRequests")
      .withIndex("by_user_and_status_and_created_at", (q) =>
        q.eq("userId", args.userId).eq("status", "processing"),
      )
      .collect();

    const availableSlots = maxConcurrent - processing.length;
    if (availableSlots <= 0) {
      // all slots full. nothing to promote.
      return [];
    }

    // grab the oldest queued requests up to the number of available slots.
    // index order is ascending by createdAt, so take() returns the oldest first
    const queued = await ctx.db
      .query("apiRequests")
      .withIndex("by_user_and_status_and_created_at", (q) =>
        q.eq("userId", args.userId).eq("status", "queued"),
      )
      .take(availableSlots);

    // flip each one to "processing"
    const now = Date.now();
    const promoted = [];
    for (const request of queued) {
      await ctx.db.patch(request._id, { status: "processing", startedAt: now });
      promoted.push({
        requestId: request.requestId,
        apiRequestId: request._id,
        input: request.input,
      });
    }

    // return the promoted jobs so the dispatcher can schedule them
    return promoted;
  },
});
```

This runs inside a mutation, which means it is transactional. Convex runs mutations as serializable transactions, so if two dispatchers run at the same time, they conflict and Convex retries one of them. The retry sees the rows already flipped to "processing" and finds fewer or no free slots. No double processing.

And here is the background job. This is where your actual work happens.

```typescript
// convex/publicApi/actions.ts
// (same file as dispatchQueuedRequests; needs v, internalAction, and internal)

export const executeJob = internalAction({
  args: {
    requestId: v.string(),
    apiRequestId: v.id("apiRequests"),
    input: v.string(),
  },
  handler: async (ctx, args) => {
    const startedAt = Date.now();
    try {
      // do the actual work here.
      // doYourActualWork is a placeholder for your AI provider call, data processing, etc.
      // this sketch assumes it resolves to { blob: Blob }
      const result = await doYourActualWork(args.input);
      const latencyMs = Date.now() - startedAt;

      // store the output file in Convex storage
      const storageId = await ctx.storage.store(result.blob);

      // mark the request as completed
      await ctx.runMutation(internal.publicApi.mutations.markCompleted, {
        apiRequestId: args.apiRequestId,
        resultStorageId: storageId,
        latencyMs,
      });
    } catch (error) {
      // mark the request as failed
      await ctx.runMutation(internal.publicApi.mutations.markFailed, {
        apiRequestId: args.apiRequestId,
        errorCode: "internal_error",
        errorMessage: error instanceof Error ? error.message : "Unknown error",
      });
    } finally {
      // kick the dispatcher again, even if recording the outcome threw.
      // this is important. when a job finishes it frees up a slot,
      // so we check if there are queued requests waiting for that slot.
      const request = await ctx.runQuery(internal.publicApi.queries.getRequest, {
        apiRequestId: args.apiRequestId,
      });
      if (request) {
        await ctx.scheduler.runAfter(
          0,
          internal.publicApi.actions.dispatchQueuedRequests,
          { userId: request.userId },
        );
      }
    }
  },
});
```

Notice the dispatcher gets kicked twice: once when a new request arrives in claimRequest, and once when a job finishes in executeJob. That second kick is what drains the queue. When a slot opens, the next queued request gets promoted.
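One gap is worth closing before launch. Promoting and scheduling are separate steps, and an action can also die without reaching its catch block (for example by hitting the action timeout). In either case the row stays "processing" forever and permanently holds one of the account's slots. A periodic sweep can find these rows. The sketch below shows only the selection logic, assuming a timeout comfortably longer than your slowest legitimate job; `findStaleRequests` is a hypothetical helper.

```typescript
interface ProcessingRow {
  requestId: string;
  status: "queued" | "processing" | "completed" | "failed";
  startedAt?: number; // set when the row was promoted to "processing"
}

// Return rows that have been "processing" for longer than timeoutMs.
// These are most likely jobs whose worker died without reporting back.
function findStaleRequests(rows: ProcessingRow[], now: number, timeoutMs: number): ProcessingRow[] {
  return rows.filter(
    (row) =>
      row.status === "processing" &&
      row.startedAt !== undefined &&
      now - row.startedAt > timeoutMs,
  );
}
```

You could run this from an internal mutation registered with `cronJobs()` from `convex/server` (for example `crons.interval` every few minutes), mark each stale row as failed with an error code such as `"timeout"` (a hypothetical code), and kick the dispatcher so the freed slots get used.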

Step 10. Add concurrency limits

You do not want one account to flood your upstream providers.

A good first step is a per account processing limit.

```
API_MAX_CONCURRENT_REQUESTS_PER_USER=5
```

Match this to real provider behavior. If your upstream allows five concurrent requests, your app should not pretend it can safely run ten.
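The `Number(process.env.API_MAX_CONCURRENT_REQUESTS_PER_USER) || 5` line in promoteQueuedRequests quietly accepts bad values: "-1" yields a negative limit (nothing is ever promoted) and "2.5" yields a fractional one. A stricter parser is a small change. This is a sketch; `parseConcurrencyLimit` is a hypothetical helper, and falling back to the default on bad input is one reasonable policy among several.

```typescript
// Parse a positive integer limit from an env var string.
// Missing, empty, non-numeric, fractional, zero, or negative values fall back.
function parseConcurrencyLimit(raw: string | undefined, fallback = 5): number {
  if (raw === undefined || raw.trim() === "") return fallback;
  const value = Number(raw);
  if (!Number.isInteger(value) || value < 1) return fallback;
  return value;
}
```

In promoteQueuedRequests this becomes `const maxConcurrent = parseConcurrencyLimit(process.env.API_MAX_CONCURRENT_REQUESTS_PER_USER);`.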

Step 11. Add polling

If you ship async, polling must be clear. A status response can look like this:

```json
{
  "request_id": "req_abc123",
  "status": "processing",
  "created_at": "2026-03-15T10:00:00.000Z",
  "started_at": "2026-03-15T10:00:02.000Z",
  "completed_at": null,
  "result_available": false,
  "result_url": null
}
```

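A client creates the job, polls until the request leaves "queued" and "processing", and then downloads the result:

```typescript
// your key. load it from a secret store or env var in real code
const apiKey = "sk_live_...";
const baseUrl = "https://your-app.convex.site";

// generate once per logical request. reuse the same value if you retry this POST
const idempotencyKey = crypto.randomUUID();

const createResponse = await fetch(`${baseUrl}/api/v1/jobs`, {
  method: "POST",
  headers: {
    Authorization: `Bearer ${apiKey}`,
    "Content-Type": "application/json",
    "Idempotency-Key": idempotencyKey,
  },
  body: JSON.stringify({
    input: "your request payload here",
  }),
});

if (!createResponse.ok) {
  throw new Error(`Create failed with HTTP ${createResponse.status}`);
}

const created = await createResponse.json();

// poll once per second until the request settles
let current = created;
while (current.status === "queued" || current.status === "processing") {
  await new Promise((resolve) => setTimeout(resolve, 1000));
  const statusResponse = await fetch(
    `${baseUrl}/api/v1/requests/${created.request_id}`,
    { headers: { Authorization: `Bearer ${apiKey}` } },
  );
  current = await statusResponse.json();
}

if (current.status === "completed" && current.result_url) {
  const resultResponse = await fetch(current.result_url, {
    headers: { Authorization: `Bearer ${apiKey}` },
  });
  const result = await resultResponse.blob(); // the finished output
}
```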
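On the server side, the status endpoint reads the row through the by_request_id index and converts it to that JSON shape. A minimal sketch of the conversion, assuming rows shaped like the apiRequests table from Step 2 (storage id simplified to a string). `toStatusResponse` and the `baseUrl` parameter are hypothetical, and the `error` field is an assumption that goes beyond the example response above so that failed requests can explain themselves.

```typescript
interface ApiRequestRow {
  requestId: string;
  status: "queued" | "processing" | "completed" | "failed";
  createdAt: number;
  startedAt?: number;
  completedAt?: number;
  resultStorageId?: string;
  outputExpiredAt?: number;
  errorCode?: string;
  errorMessage?: string;
}

// Epoch milliseconds to an ISO string, keeping null for "not yet".
function isoOrNull(ms: number | undefined): string | null {
  return ms === undefined ? null : new Date(ms).toISOString();
}

function toStatusResponse(row: ApiRequestRow, baseUrl: string) {
  const resultAvailable =
    row.status === "completed" &&
    row.resultStorageId !== undefined &&
    row.outputExpiredAt === undefined;
  return {
    request_id: row.requestId,
    status: row.status,
    created_at: isoOrNull(row.createdAt),
    started_at: isoOrNull(row.startedAt),
    completed_at: isoOrNull(row.completedAt),
    result_available: resultAvailable,
    result_url: resultAvailable ? `${baseUrl}/api/v1/requests/${row.requestId}/result` : null,
    // only failed requests carry an error object
    error:
      row.status === "failed"
        ? { code: row.errorCode ?? "internal_error", message: row.errorMessage ?? null }
        : null,
  };
}
```

Keeping the conversion in one function means the polling endpoint and any dashboard view report the same fields in the same format.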

Step 12. Add revocation

Keys will leak. People will rotate secrets. Teams will leave.

Keep the row. Set revokedAt. Stop accepting the key. Keep history and analytics.

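```typescript
// convex/developerApi/mutations.ts
// (same file as createApiKey; needs v from "convex/values" and mutation from "../_generated/server")

export const revokeApiKey = mutation({
  args: {
    apiKeyId: v.id("apiKeys"),
  },
  handler: async (ctx, args) => {
    const identity = await ctx.auth.getUserIdentity();
    const email = identity?.email;
    if (!email) {
      throw new Error("Unauthorized");
    }

    const user = await ctx.db
      .query("users")
      .withIndex("by_email", (q) => q.eq("email", email))
      .first();

    const key = await ctx.db.get(args.apiKeyId);
    // only the owning account may revoke. answer "not found" either way,
    // so callers cannot probe for other accounts' key ids
    if (!user || !key || key.userId !== user._id) {
      throw new Error("Key not found");
    }

    // keep the row for history and analytics. stamp revokedAt only once
    if (key.revokedAt === undefined) {
      await ctx.db.patch(args.apiKeyId, { revokedAt: Date.now() });
    }

    return { ok: true };
  },
});
```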

Step 13. Add prepaid usage or metering

You need a usage model. A simple launch model is prepaid usage. User buys a pack. Account gets balance. Successful requests consume one unit. Failed requests consume nothing. Queued and processing work can reserve capacity.

This works well because it is easy to explain, easy to show in the UI, and easy to reason about in queue logic.
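The two balance fields in the Step 2 schema (`apiGenerationsRemaining` and `apiReservedRequestCount`) are enough for this model. A minimal sketch of the arithmetic as pure functions, assuming one unit per request; `reserveUnit` and `settleUnit` are hypothetical names. In Convex you would apply them inside the same mutations that insert the row (claimRequest) and record the outcome (markCompleted, markFailed), so the balance and the request status change together.

```typescript
interface Balance {
  remaining: number; // apiGenerationsRemaining
  reserved: number; // apiReservedRequestCount: queued plus processing
}

// Units the account can still commit to new requests.
function availableUnits(b: Balance): number {
  return b.remaining - b.reserved;
}

// On accept: hold one unit, or return null so the caller can answer insufficient_balance.
function reserveUnit(b: Balance): Balance | null {
  if (availableUnits(b) < 1) return null;
  return { remaining: b.remaining, reserved: b.reserved + 1 };
}

// On finish: always release the hold, but only charge for successful requests.
function settleUnit(b: Balance, outcome: "completed" | "failed"): Balance {
  return {
    remaining: outcome === "completed" ? b.remaining - 1 : b.remaining,
    reserved: Math.max(0, b.reserved - 1),
  };
}
```

Reserving at accept time is what stops an account with one unit left from queueing fifty requests that can never all be paid for.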

Step 14. Add retention

If you store generated output, decide how long it stays.

A simple rule is thirty days. Request metadata and status history remain forever. The output file expires. The result endpoint returns 410 after expiry. Analytics still work.
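A sketch of that rule, assuming the `storedUntil` and `outputExpiredAt` fields from Step 2. Only the 410 for expired output comes from this post; the 409 for "not ready", the 404 for failed requests, and the `no_result` code are assumptions you should adapt. `computeStoredUntil` and `resultEndpointStatus` are hypothetical helpers.

```typescript
const RETENTION_MS = 30 * 24 * 60 * 60 * 1000; // thirty days

type RequestStatus = "queued" | "processing" | "completed" | "failed";

interface StoredResultRow {
  status: RequestStatus;
  storedUntil?: number; // deadline for deleting the output file
  outputExpiredAt?: number; // set once the file has actually been removed
}

// Set storedUntil when the job completes.
function computeStoredUntil(completedAt: number): number {
  return completedAt + RETENTION_MS;
}

// Decide what GET /api/v1/requests/:requestId/result should answer.
function resultEndpointStatus(row: StoredResultRow, now: number): { http: number; code: string | null } {
  if (row.status === "queued" || row.status === "processing") {
    return { http: 409, code: "request_not_ready" };
  }
  if (row.status === "failed") {
    return { http: 404, code: "no_result" }; // failed requests never produced output
  }
  // Treat the output as gone once the deadline passes, even if cleanup has not run yet.
  const expired =
    row.outputExpiredAt !== undefined ||
    (row.storedUntil !== undefined && now >= row.storedUntil);
  return expired ? { http: 410, code: "expired_output" } : { http: 200, code: null };
}
```

The actual cleanup can be a scheduled or cron job that calls `ctx.storage.delete(resultStorageId)` and sets `outputExpiredAt`. The row itself stays, so analytics keep working.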

Step 15. Add logs and analytics

Log safe fields only: request id, user id, key id, status, latency, and error code.

Never log authorization headers, raw API keys, secret hashes, or the pepper.
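An allowlist is safer than trying to remember everything that must be removed. A minimal sketch, assuming log entries start as plain objects; `toSafeLogEntry` and `redactApiKeys` are hypothetical helpers. The second one matters because free-text error messages from upstream code can echo a token back.

```typescript
// The safe fields listed above.
const SAFE_LOG_FIELDS = ["requestId", "userId", "keyId", "status", "latencyMs", "errorCode"] as const;

type SafeLogEntry = Partial<Record<(typeof SAFE_LOG_FIELDS)[number], string | number>>;

// Copy only allowlisted fields. Headers, tokens, hashes, and any field
// nobody thought about when this was written are dropped.
function toSafeLogEntry(fields: Record<string, unknown>): SafeLogEntry {
  const entry: SafeLogEntry = {};
  for (const name of SAFE_LOG_FIELDS) {
    const value = fields[name];
    if (typeof value === "string" || typeof value === "number") {
      entry[name] = value;
    }
  }
  return entry;
}

// Mask anything shaped like an API key inside free text such as error messages.
function redactApiKeys(text: string): string {
  return text.replace(/sk_live_[A-Za-z0-9_-]+/g, "sk_live_[redacted]");
}
```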

Useful analytics to track: total requests, success rate, response code breakdown, per-key request count, how many requests are queued right now, and how many are processing right now. These are useful for both your users and your own admin pages.
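Because apiRequests already holds one row per logical request, all of these numbers fall out of one pass over an account's rows. A minimal sketch, assuming rows with the Step 2 fields; `summarizeRequests` is a hypothetical helper. Since `statusCode` is only populated on failures in that schema, this sketch counts completed rows as 200, which is an assumption. For very large accounts you would eventually maintain counters instead of scanning every row.

```typescript
interface RequestRecord {
  apiKeyId: string;
  status: "queued" | "processing" | "completed" | "failed";
  statusCode?: number;
}

interface UsageSummary {
  total: number;
  successRate: number | null; // completed / finished. null until something finishes
  byStatusCode: Record<string, number>;
  byKey: Record<string, number>;
  queued: number;
  processing: number;
}

function summarizeRequests(rows: RequestRecord[]): UsageSummary {
  const summary: UsageSummary = {
    total: rows.length, successRate: null, byStatusCode: {}, byKey: {}, queued: 0, processing: 0,
  };
  let completed = 0;
  let failed = 0;
  for (const row of rows) {
    summary.byKey[row.apiKeyId] = (summary.byKey[row.apiKeyId] ?? 0) + 1;
    // assumption: successful rows have no statusCode, so count them as 200
    const code = row.statusCode ?? (row.status === "completed" ? 200 : undefined);
    if (code !== undefined) {
      summary.byStatusCode[String(code)] = (summary.byStatusCode[String(code)] ?? 0) + 1;
    }
    if (row.status === "completed") completed++;
    else if (row.status === "failed") failed++;
    else if (row.status === "queued") summary.queued++;
    else summary.processing++;
  }
  const finished = completed + failed;
  summary.successRate = finished === 0 ? null : completed / finished;
  return summary;
}
```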

Things to think about before launch

Account-scoped or key-scoped access. Can one key read requests created by another key from the same account? Many products launch account-scoped. That is simpler, and it matches billing.

Idempotency. This is a must. Especially if requests cost money.

Sync or async. If your work is heavy or can queue, async is often the better product decision.

Real provider limits. Do not guess. Match your concurrency cap to your upstream reality.

One time reveal. Show the full key once. Never again.

Revocation. Make revoke fast and easy.

Clear error design. Document your errors well: invalid key, invalid request, insufficient balance, idempotency conflict, request not ready, expired output, and internal error.

Usage model. Prepaid, metered, or subscription plus overage. Choose one and make it clear.

Retention. How long do results stay downloadable?

Logging and privacy. You need enough data to debug. But not enough to leak secrets.
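For the error design item, one option is a single catalog that pairs each error code with its HTTP status, so every endpoint builds errors the same way. A sketch under stated assumptions: 401 for a bad key, 400 for a bad request, 409 for an idempotency conflict, and 410 for expired output match the rest of this post, while 402, the 409 for "not ready", and 500 are assumptions. `API_ERRORS` and `apiErrorPayload` are hypothetical names.

```typescript
// Error codes from the list above, each paired with an HTTP status.
const API_ERRORS = {
  invalid_api_key: 401,
  invalid_request: 400,
  insufficient_balance: 402,
  idempotency_conflict: 409,
  request_not_ready: 409,
  expired_output: 410,
  internal_error: 500,
} as const;

type ApiErrorCode = keyof typeof API_ERRORS;

interface ApiErrorPayload {
  status: number;
  body: { error: { code: ApiErrorCode; message: string } };
}

// One place that builds every error, so the response shape never drifts between endpoints.
function apiErrorPayload(code: ApiErrorCode, message: string): ApiErrorPayload {
  return { status: API_ERRORS[code], body: { error: { code, message } } };
}
```

In an HTTP action this becomes `new Response(JSON.stringify(p.body), { status: p.status })`, and the same table doubles as the source for your error documentation.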

The whole system in one view

Product layer. Account owns keys. User creates and revokes keys. User sees usage and docs.

Security layer. Server generates the secret. Server stores only hash and metadata. Pepper lives in server env vars.

Request layer. Auth with bearer token. Idempotency key for safe retries. One logical request row.

Execution layer. Queue. Dispatcher. Concurrency limit. Async processing.

Result layer. Poll status. Fetch result. Expire stored output later.

That is the whole system. Not just a random key table. A real API product.