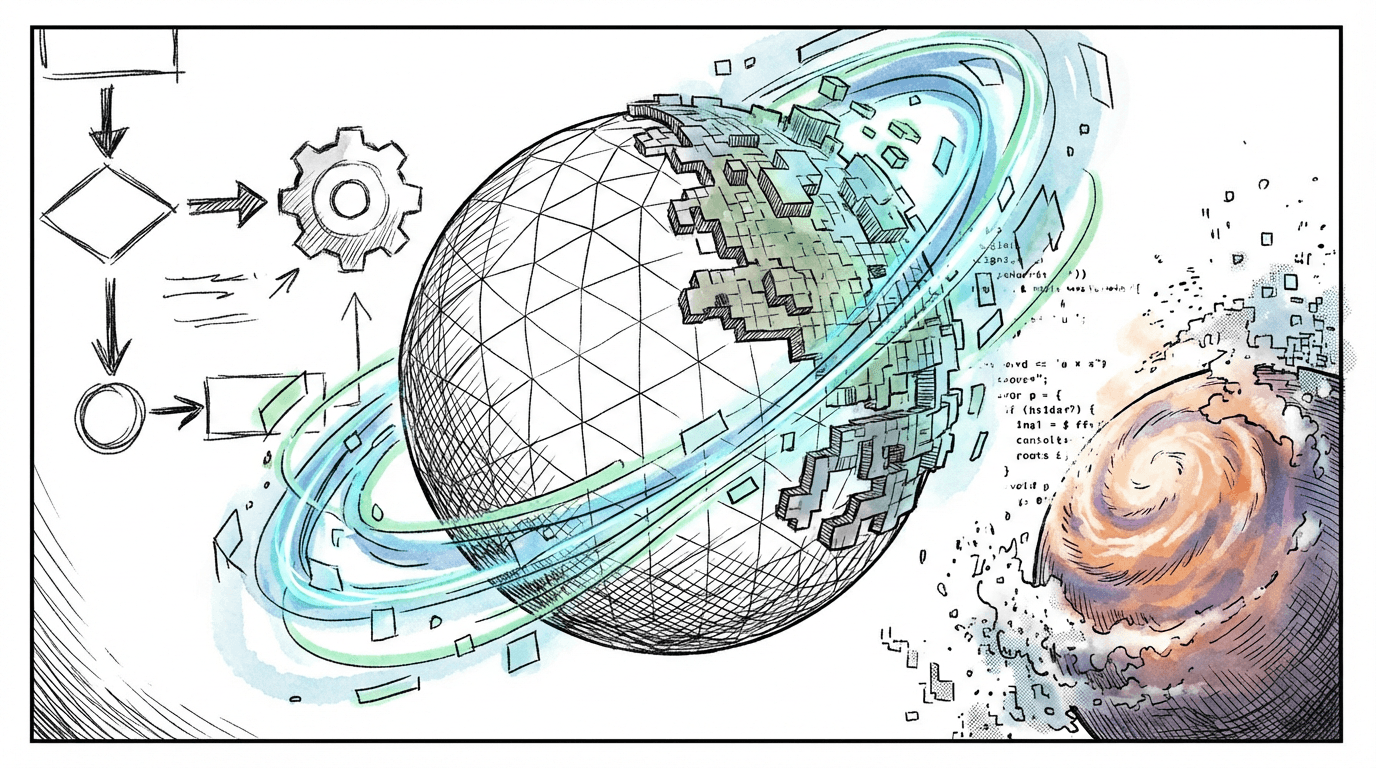

Building a Procedural Planet from Scratch: The Full Pipeline

Just a guy who loves to write code and watch anime.

Introduction

This is a walkthrough of every layer in a procedural 3D world generation system. Each section solves one problem. They build on each other in order. By the end you have a fully textured, threaded, planet-scale terrain with proper LOD, stitching, and depth precision.

1. Heightmap: The Starting Point

What: A flat grid of vertices where each vertex's height is set by sampling some height function.

Why: Every terrain system starts here. Before noise, before LOD, before planets, you need to turn a flat plane into bumpy ground.

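How: Create a PlaneGeometry with a resolution (say 127x127 segments, which gives 128x128 vertices). Loop through every vertex. For each one, sample a height function at its position in the plane and write the result into the remaining axis. Three.js's PlaneGeometry lies in the XY plane, so the code below samples at (x, y) and sets z. Rotate the mesh so the plane lies flat in the world.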

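```javascript
// The legacy Geometry class (and its vertices array) was removed from
// three.js core in r125; PlaneGeometry is now a BufferGeometry.
const position = plane.geometry.attributes.position;

for (let i = 0; i < position.count; i++) {
  // offset moves this chunk's local vertex into world space.
  const [height, weight] = heightGenerator.Get(
    position.getX(i) + offset.x,
    position.getY(i) + offset.y,
  );
  position.setZ(i, height * weight);
}

position.needsUpdate = true;
plane.geometry.computeVertexNormals();
```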

The height function can be anything. An image (read pixel brightness as height), a math function, or noise. The code supports multiple height generators blended together by weight, so you can layer a heightmap image with procedural noise.

The world is split into chunks. Each chunk is one PlaneGeometry at some offset. This lets you load/unload terrain as the player moves.
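
The per-vertex loop and the weighted blend fit in a few lines of plain JavaScript. This is a minimal sketch, not the article's code. blendHeights and buildChunkHeights are hypothetical helpers. Each generator is assumed to expose Get(x, y) returning [height, weight], and dividing by the total weight is just one reasonable way to combine generators. Each chunk is addressed by the offset of its center, which is what lets neighbouring chunks agree along their shared edge.

```javascript
// Weighted average of several height generators at one point.
// Each generator is assumed to expose Get(x, y) -> [height, weight].
function blendHeights(generators, x, y) {
  let total = 0;
  let normalization = 0;
  for (const gen of generators) {
    const [height, weight] = gen.Get(x, y);
    total += height * weight;
    normalization += weight;
  }
  return normalization > 0 ? total / normalization : 0;
}

// Heights for one square chunk of side `size` centred at (offsetX, offsetY),
// sampled on a resolution x resolution vertex grid (row-major, resolution >= 2).
function buildChunkHeights(generators, resolution, size, offsetX, offsetY) {
  const heights = new Float32Array(resolution * resolution);
  for (let j = 0; j < resolution; j++) {
    for (let i = 0; i < resolution; i++) {
      const x = (i / (resolution - 1) - 0.5) * size + offsetX;
      const y = (j / (resolution - 1) - 0.5) * size + offsetY;
      heights[j * resolution + i] = blendHeights(generators, x, y);
    }
  }
  return heights;
}

// Usage: two chunks side by side sample the same world positions along
// their shared edge, so their edge heights match exactly.
const ramp = { Get: (x, y) => [0.1 * x + y, 1] };
const left = buildChunkHeights([ramp], 5, 100, 0, 0);
const right = buildChunkHeights([ramp], 5, 100, 100, 0);
```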

2. Perlin/Simplex Noise

What: Replace the heightmap image with a procedural noise function that generates infinite, non-repeating terrain.

Why: An image heightmap has fixed resolution and fixed extent. Noise has neither. You can sample it at any coordinate and get a consistent, deterministic value, so the terrain can go on indefinitely.

How: The noise class wraps either Perlin or Simplex noise with octaves. "Octaves" means you sample the noise multiple times at increasing frequencies and decreasing amplitudes, then add them together. This is called fractal Brownian motion (fBm).

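```javascript
Get(x, y) {
  const xs = x / this._params.scale;
  const ys = y / this._params.scale;
  let amplitude = 1.0;
  let frequency = 1.0;
  let total = 0;
  let normalization = 0;

  for (let o = 0; o < this._params.octaves; o++) {
    // Perlin/simplex return roughly [-1, 1]. Remap to [0, 1] so that
    // Math.pow below never receives a negative base (which yields NaN).
    const noiseValue = this._noise.Get(xs * frequency, ys * frequency) * 0.5 + 0.5;
    total += amplitude * noiseValue;
    normalization += amplitude;
    amplitude *= this._params.persistence;
    frequency *= this._params.lacunarity;
  }

  // Weighted average of the octaves, back in [0, 1].
  total /= normalization;
  return Math.pow(total, this._params.exponentiation) * this._params.height;
}
```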

The key parameters:

scale: how zoomed in/out the noise is. Larger = smoother hills.

octaves: how many layers. More = more fine detail.

persistence: how much each octave's amplitude shrinks. Lower = smoother.

lacunarity: how much each octave's frequency increases. Higher = more detail per octave.

exponentiation: raise the final value to a power. Values > 1 flatten valleys and sharpen peaks. Makes terrain look more natural.
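
The Get method relies on a noise object that isn't shown. To make the octave loop runnable on its own, the sketch below swaps in a small hash-based value noise. That is an assumption: Perlin or simplex would slot into the same place. The parameter values are arbitrary. Each sample is remapped from [-1, 1] to [0, 1] before summing, so Math.pow never sees a negative base.

```javascript
// Hypothetical integer hash -> pseudo-random value in [-1, 1].
function hash2(ix, iy, seed) {
  let h = (ix * 374761393 + iy * 668265263 + seed * 144665) | 0;
  h = Math.imul(h ^ (h >>> 13), 1274126177);
  h ^= h >>> 16;
  return ((h >>> 0) / 4294967295) * 2 - 1;
}

// 2D value noise: random values on an integer lattice, smoothly interpolated.
// Stands in for Perlin/simplex here; output stays in [-1, 1].
function valueNoise2D(x, y, seed) {
  const x0 = Math.floor(x);
  const y0 = Math.floor(y);
  const tx = x - x0;
  const ty = y - y0;
  const sx = tx * tx * (3 - 2 * tx); // smoothstep fade curve
  const sy = ty * ty * (3 - 2 * ty);
  const a = hash2(x0, y0, seed);
  const b = hash2(x0 + 1, y0, seed);
  const c = hash2(x0, y0 + 1, seed);
  const d = hash2(x0 + 1, y0 + 1, seed);
  const bottom = a + (b - a) * sx;
  const top = c + (d - c) * sx;
  return bottom + (top - bottom) * sy;
}

// fBm using the same parameters as the Get method.
function fbm(x, y, p) {
  const xs = x / p.scale;
  const ys = y / p.scale;
  let amplitude = 1.0;
  let frequency = 1.0;
  let total = 0;
  let normalization = 0;
  for (let o = 0; o < p.octaves; o++) {
    const n = valueNoise2D(xs * frequency, ys * frequency, p.seed);
    total += amplitude * (n * 0.5 + 0.5); // remap [-1, 1] -> [0, 1]
    normalization += amplitude;
    amplitude *= p.persistence;
    frequency *= p.lacunarity;
  }
  total /= normalization;
  return Math.pow(total, p.exponentiation) * p.height;
}

// Arbitrary example parameters.
const params = {
  octaves: 6, persistence: 0.5, lacunarity: 2.0,
  exponentiation: 2.0, height: 64, scale: 256, seed: 1,
};
```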

3. Quadtree and Level of Detail (LOD)

What: A quadtree that subdivides space near the camera into small chunks and keeps distant areas as large chunks.

Why: You cannot render the entire world at full resolution. A planet might need millions of chunks at max detail. LOD means: high detail near the camera, low detail far away. The quadtree decides where to split.

How: Start with one big node covering the whole terrain. Insert the camera position. If the camera is close enough to a node and the node is bigger than the minimum size, split it into four children. Recurse. Nodes near the camera get split many times (small, detailed). Nodes far away stay large (coarse).

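```javascript
_Insert(child, pos) {
  const dist = child.center.distanceTo(pos);

  // Split when the camera is closer than the node's width and the node
  // is still larger than the smallest allowed chunk.
  if (dist < child.size.x && child.size.x > MIN_NODE_SIZE) {
    child.children = this._CreateChildren(child);

    // Recurse so the children can split further near the camera.
    for (let c of child.children) {
      this._Insert(c, pos);
    }
  }
}
```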

The leaf nodes of the quadtree become terrain chunks. Each leaf gets the same vertex resolution (say 64x64), but covers a different world-space area. A small leaf near the camera covers 500m at 64x64, giving fine detail. A large leaf far away covers 8000m at 64x64, giving coarse detail. Same vertex count, different density. That is LOD.
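
Here is the same splitting rule as a self-contained sketch. It uses a hypothetical plain-object node (center cx, cy and edge length size) instead of vector classes, plus a made-up 32 km root. After a camera position is inserted, the leaves around the camera end up at the minimum size while distant leaves stay large.

```javascript
// Hypothetical smallest chunk edge length (metres).
const MIN_NODE_SIZE = 500;

function makeNode(cx, cy, size) {
  return { cx, cy, size, children: [] };
}

// Split a square node into four quadrants of half the size.
function createChildren(node) {
  const half = node.size / 2;
  const quarter = node.size / 4;
  return [
    makeNode(node.cx - quarter, node.cy - quarter, half),
    makeNode(node.cx + quarter, node.cy - quarter, half),
    makeNode(node.cx - quarter, node.cy + quarter, half),
    makeNode(node.cx + quarter, node.cy + quarter, half),
  ];
}

// Same rule as above: split while the camera is closer than the node's
// size and the node is still larger than the minimum.
function insert(node, px, py) {
  const dist = Math.hypot(node.cx - px, node.cy - py);
  if (dist < node.size && node.size > MIN_NODE_SIZE) {
    node.children = createChildren(node);
    for (const child of node.children) insert(child, px, py);
  }
}

// Leaves are the chunks to build; each gets the same vertex resolution.
function collectLeaves(node, out = []) {
  if (node.children.length === 0) out.push(node);
  else for (const child of node.children) collectLeaves(child, out);
  return out;
}

const root = makeNode(0, 0, 32000);
insert(root, 100, 100);
const leaves = collectLeaves(root);
```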

4. Planetary LOD: From Flat to Spherical

What: Wrap the flat quadtree terrain onto a sphere to make a planet.

Why: A flat terrain has edges. A planet does not. For a space game or planet-scale world, you need the terrain to wrap around a sphere.

How: Instead of one quadtree, use six. Each one maps to one face of a cube. Each face has a transform matrix that positions and rotates it to form the six sides of a cube. This is called a "cube sphere".

```javascript
// 6 faces: +Y, -Y, +X, -X, +Z, -Z.
// transforms[i] is the Matrix4 that positions and rotates face i on the cube.
for (let i = 0; i < 6; i++) {
  sides.push({
    transform: transforms[i],
    quadtree: new QuadTree({
      side: i,
      size: radius,
      localToWorld: transforms[i],
    }),
  });
}
```

When generating vertices, each vertex starts as a point on the cube face. It is projected onto the sphere by normalizing it (making it unit length) and then multiplying by the planet radius. Height is then added along the radial direction (outward from the planet center).

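```javascript
// _P, _D, _H, _W are reusable THREE.Vector3 temporaries.
// In its local frame, the face sits at z = radius.
_P.set(xp - half, yp - half, radius);
_P.add(offset); // This chunk's offset within the face.
_P.normalize(); // Project onto unit sphere.
_D.copy(_P); // Save the radial direction.
_P.multiplyScalar(radius);

// Sample height at the world-space position of the sphere point.
_W.copy(_P).applyMatrix4(localToWorld);
const height = generateHeight(_W);

_H.copy(_D).multiplyScalar(height);
_P.add(_H); // Push vertex outward by height.
```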

The terrain is now spherical. The quadtree works the same way; it just operates on each cube face.
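
The projection step can be sketched without Three.js. This version swaps the six transform matrices for a hypothetical table of axis permutations, which is an equivalent way to place the faces for illustration. The height function is passed in as a parameter. Height is sampled at the projected sphere point, so two faces that share an edge produce identical vertices along it.

```javascript
// Hypothetical face table: maps face coordinates (u, v) in [-1, 1]
// to a point on the surface of the cube [-1, 1]^3.
const FACES = [
  (u, v) => [u, 1, -v], // +Y
  (u, v) => [u, -1, v], // -Y
  (u, v) => [1, v, -u], // +X
  (u, v) => [-1, v, u], // -X
  (u, v) => [u, v, 1], // +Z
  (u, v) => [-u, v, -1], // -Z
];

// Project a cube-face point onto the sphere, then push it outward
// by the height sampled at the unperturbed sphere position.
function cubeToSphere(face, u, v, radius, heightFn) {
  const [x, y, z] = FACES[face](u, v);
  const len = Math.hypot(x, y, z);
  const dx = x / len; // unit radial direction
  const dy = y / len;
  const dz = z / len;
  const height = heightFn(dx * radius, dy * radius, dz * radius);
  const r = radius + height;
  return [dx * r, dy * r, dz * r];
}

// The +Z face's right edge and the +X face's left edge are the same cube
// points, so cubeToSphere(4, 1, v, ...) and cubeToSphere(2, -1, v, ...) agree.
```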

5. Texturing: Triplanar Mapping, Splatting, Blending

What: Apply multiple terrain textures (grass, rock, sand, snow) based on height, slope, and biome, without visible tiling.

Why: Vertex colors look flat. Real terrain needs texture detail. But naively mapping a texture onto terrain causes visible repetition (tiling). And you need different textures at different altitudes and slopes.

How: Three techniques combined.

Texture splatting: Each vertex stores weights for up to 4 textures. The CPU decides which textures based on height and surface angle (slope). Steep surfaces get rock. Flat high areas get snow. Low flat areas get grass. These weights are passed to the shader as vertex attributes.
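
The CPU side of splatting can be sketched as a function that turns height and surface angle into weights. A few assumptions apply. The sketch uses three of the four texture slots (grass, rock, snow). It defines slope as 1 minus the cosine between the normal and the local up direction. The thresholds are made-up values you would tune per planet.

```javascript
// GLSL-style smoothstep: 0 below edge0, 1 above edge1, smooth in between.
function smoothstep(edge0, edge1, x) {
  const t = Math.min(Math.max((x - edge0) / (edge1 - edge0), 0), 1);
  return t * t * (3 - 2 * t);
}

// Hypothetical thresholds.
const ROCK_SLOPE_START = 0.2; // slope where rock starts to appear
const ROCK_SLOPE_FULL = 0.4; // slope where the surface is all rock
const SNOW_START = 300; // height where snow starts (world units)
const SNOW_FULL = 500; // height where flat ground is all snow

// normal and up are unit vectors [x, y, z]; on a planet, up is the
// radial direction at the vertex.
function splatWeights(height, normal, up) {
  const cosAngle = normal[0] * up[0] + normal[1] * up[1] + normal[2] * up[2];
  const slope = 1 - cosAngle; // 0 = flat, 1 = vertical wall
  const rock = smoothstep(ROCK_SLOPE_START, ROCK_SLOPE_FULL, slope);
  const flat = 1 - rock;
  const snowiness = smoothstep(SNOW_START, SNOW_FULL, height);
  // grass + rock + snow always sums to 1.
  return { grass: flat * (1 - snowiness), rock, snow: flat * snowiness };
}
```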

Triplanar mapping: Instead of using UV coordinates (which stretch badly on steep surfaces), sample the texture three times, projecting along each axis onto the ZY, XZ, and XY planes. Blend the three samples based on the surface normal direction. Flat ground (normal pointing along Y) mostly uses the top-down XZ projection. Steep walls use the ZY or XY projection, depending on which way they face. No stretching.

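```glsl
// Sample one texture from three axis-aligned projections, blended by the normal.
vec4 triplanar(sampler2D tex, vec3 pos, vec3 normal, float scale) {
  vec4 dx = texture(tex, pos.zy / scale); // X-facing
  vec4 dy = texture(tex, pos.xz / scale); // Y-facing (top-down)
  vec4 dz = texture(tex, pos.xy / scale); // Z-facing

  // Weights from the normal's components, normalized to sum to 1.
  vec3 weights = abs(normal);
  weights /= (weights.x + weights.y + weights.z);
  return dx * weights.x + dy * weights.y + dz * weights.z;
}
```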

Texture bombing: To hide tiling repetition, the shader randomly offsets and blends the texture samples using a noise lookup. Two samples of the same texture, taken at different random offsets, are blended with a smooth transition. The repeating pattern becomes much harder to spot.
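
Texture bombing comes in several variants. The offset-and-blend variant described above is sketched here in JavaScript to keep one language, although in practice it runs per fragment in the shader. The sketch assumes a low-frequency noise value k at each point. Its integer part picks a random UV offset, and its fractional part cross-fades toward the offset of the next integer. hashOffset, the 0.2/0.8 blend edges, and the stand-in texture are all hypothetical.

```javascript
// GLSL-style helpers.
const fract = (x) => x - Math.floor(x);
function smoothstep(edge0, edge1, x) {
  const t = Math.min(Math.max((x - edge0) / (edge1 - edge0), 0), 1);
  return t * t * (3 - 2 * t);
}

// Hypothetical hash: integer band index -> pseudo-random UV offset in [0, 1)^2.
function hashOffset(i) {
  return [fract(Math.sin(i * 127.1) * 43758.5453), fract(Math.sin(i * 311.7) * 43758.5453)];
}

// k is a low-frequency noise value at this surface point, scaled so it
// changes by several whole units across the terrain.
function bombedSample(sampleTex, u, v, k) {
  const i = Math.floor(k);
  const f = k - i;
  const [ax, ay] = hashOffset(i);
  const [bx, by] = hashOffset(i + 1);
  const a = sampleTex(u + ax, v + ay);
  const b = sampleTex(u + bx, v + by);
  const t = smoothstep(0.2, 0.8, f); // hold one offset, then cross-fade
  return a * (1 - t) + b * t;
}

// A tiling stand-in "texture": any periodic function of (u, v) works here.
const checker = (u, v) => 0.5 + 0.5 * Math.sin(2 * Math.PI * u) * Math.cos(2 * Math.PI * v);
```

Because the blend reaches the next band's offset before k crosses an integer, the result stays continuous even though each band uses a different offset.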

All three combine in the fragment shader: splat weights pick which textures, triplanar removes stretch, bombing hides tiling.

6. Atmospheric Scattering

What: A sky shader that simulates light scattering through the atmosphere, giving realistic sunsets, blue skies, and haze at the horizon.

Why: A skybox texture is static. If the sun moves, the sky does not respond. Atmospheric scattering computes sky color physically based on the sun angle.

How: The shader casts a ray from the camera through each pixel into the atmosphere (a shell around the planet). It marches along the ray, accumulating Rayleigh scattering (which makes the sky blue) and Mie scattering (which creates the bright glow around the sun). The result depends on: sun position, altitude, and the angle between the view direction and the sun.

This also adds distance fog naturally. Objects further away pick up more atmospheric haze. It makes the planet feel massive because distant terrain fades into the sky color instead of just clipping at the far plane.
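
A CPU version of the ray march makes the moving parts concrete. This is a single-scattering sketch in the style of common real-time atmosphere shaders, not the article's shader. The Earth-like constants are assumptions, and the sketch ignores the planet's shadow on the sun ray and multiple scattering. At each sample along the view ray, it accumulates Rayleigh and Mie density and marches toward the sun to get the light's optical depth. It then attenuates both and applies the two phase functions at the end.

```javascript
// Assumed Earth-like constants (metres); none of these come from the article.
const PLANET_RADIUS = 6360e3;
const ATMOSPHERE_RADIUS = 6420e3;
const RAYLEIGH_HEIGHT = 8000; // density scale height for air molecules
const MIE_HEIGHT = 1200; // density scale height for aerosols
const BETA_R = [5.8e-6, 13.5e-6, 33.1e-6]; // Rayleigh scattering per metre (R, G, B)
const BETA_M = 21e-6; // Mie scattering per metre
const MIE_G = 0.76; // Mie anisotropy (forward scattering)
const SUN_INTENSITY = 20;

const dot = (a, b) => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
const along = (o, d, t) => [o[0] + d[0] * t, o[1] + d[1] * t, o[2] + d[2] * t];

// Ray/sphere intersection with a sphere at the origin; d must be unit length.
function raySphere(o, d, r) {
  const b = dot(o, d);
  const disc = b * b - (dot(o, o) - r * r);
  if (disc < 0) return null;
  const s = Math.sqrt(disc);
  return [-b - s, -b + s];
}

// Single-scattering sky colour along `dir` for a viewer at `origin`.
function skyColor(origin, dir, sunDir, viewSamples = 16, sunSamples = 8) {
  const hit = raySphere(origin, dir, ATMOSPHERE_RADIUS);
  if (!hit || hit[1] < 0) return [0, 0, 0];
  const t0 = Math.max(hit[0], 0);
  const ds = (hit[1] - t0) / viewSamples;
  let depthR = 0; // optical depth (density x distance) from the viewer
  let depthM = 0;
  const sumR = [0, 0, 0];
  const sumM = [0, 0, 0];
  for (let i = 0; i < viewSamples; i++) {
    const p = along(origin, dir, t0 + (i + 0.5) * ds);
    const h = Math.sqrt(dot(p, p)) - PLANET_RADIUS;
    const densR = Math.exp(-h / RAYLEIGH_HEIGHT) * ds;
    const densM = Math.exp(-h / MIE_HEIGHT) * ds;
    depthR += densR;
    depthM += densM;
    // Optical depth from this sample to the top of the atmosphere, toward the sun.
    const dsl = raySphere(p, sunDir, ATMOSPHERE_RADIUS)[1] / sunSamples;
    let sunR = 0;
    let sunM = 0;
    for (let j = 0; j < sunSamples; j++) {
      const q = along(p, sunDir, (j + 0.5) * dsl);
      const hl = Math.sqrt(dot(q, q)) - PLANET_RADIUS;
      sunR += Math.exp(-hl / RAYLEIGH_HEIGHT) * dsl;
      sunM += Math.exp(-hl / MIE_HEIGHT) * dsl;
    }
    for (let c = 0; c < 3; c++) {
      // Mie extinction is taken as 1.1x its scattering, a common approximation.
      const tau = BETA_R[c] * (depthR + sunR) + 1.1 * BETA_M * (depthM + sunM);
      const attenuation = Math.exp(-tau);
      sumR[c] += attenuation * densR;
      sumM[c] += attenuation * densM;
    }
  }
  const mu = dot(dir, sunDir); // cosine of the angle between view and sun
  const phaseR = (3 / (16 * Math.PI)) * (1 + mu * mu);
  const g2 = MIE_G * MIE_G;
  const phaseM = ((3 / (8 * Math.PI)) * (1 - g2) * (1 + mu * mu)) /
    ((2 + g2) * Math.pow(1 + g2 - 2 * MIE_G * mu, 1.5));
  return sumR.map((r, c) => SUN_INTENSITY * (r * BETA_R[c] * phaseR + sumM[c] * BETA_M * phaseM));
}

// Viewer 1 m above the ground, looking straight up, sun 30 degrees from the zenith.
const eye = [0, PLANET_RADIUS + 1, 0];
const sun30 = [Math.sin(Math.PI / 6), Math.cos(Math.PI / 6), 0];
const zenithColor = skyColor(eye, [0, 1, 0], sun30);
```

In this setup, the blue channel comes out larger than the red one, which is the Rayleigh effect the section describes. Stopping the march at the terrain hit point instead of the atmosphere's far edge should give the distance haze.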

7. Threading with Web Workers

What: Move all terrain mesh generation off the main thread into a pool of Web Workers.

Why: Generating terrain vertices involves thousands of noise samples, normal calculations, texture weight computations. This is heavy math. Doing it on the main thread freezes the game.

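How: A WorkerPool manages 7 workers (one per spare core on an 8-core machine; navigator.hardwareConcurrency - 1 sizes the pool to the actual hardware). When a new chunk is needed, the terrain manager serializes the parameters into plain data and enqueues it. Workers receive messages through structured cloning, which drops class prototypes and methods and cannot copy functions at all, so noise generators cannot be sent as-is. The pool assigns each job to the next free worker.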

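The worker reconstructs noise generators from the params and runs all the mesh generation math. It then copies the results into SharedArrayBuffer-backed arrays and posts a "done" message. Because the memory is shared, posting the arrays does not copy the data. The main thread wraps the shared buffers directly as Three.js geometry attributes, with no extra CPU-side copy. Browsers only expose SharedArrayBuffer on cross-origin isolated pages (served with Cross-Origin-Opener-Policy and Cross-Origin-Embedder-Policy headers). Transferable ArrayBuffers are the usual alternative.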

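```javascript
// Worker side: copy the results into shared memory once.
const posBuf = new Float32Array(new SharedArrayBuffer(4 * positions.length));
posBuf.set(positions);
self.postMessage({ positions: posBuf }); // The SharedArrayBuffer is shared, not copied.

// Main thread (result = event.data): wrap the shared array directly.
// THREE.BufferAttribute uses the array as-is, whereas
// THREE.Float32BufferAttribute would copy it into a new Float32Array.
chunk.geometry.setAttribute(
  "position",
  new THREE.BufferAttribute(result.positions, 3),
);
```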

The main thread never waits for workers. It renders what it has, and when a chunk finishes in the background, it appears on the next frame. Mesh generation never stalls the frame loop.
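
The pool's scheduling logic is small. This is a minimal sketch, not the article's WorkerPool. It works with anything that has postMessage and an onmessage handler, which a browser Worker provides. The worker script name in the comment is hypothetical. Jobs queue up when every worker is busy, and a finishing worker immediately picks up the next job.

```javascript
// createWorker is a factory, e.g. () => new Worker("terrain-worker.js")
// in a browser (the file name is hypothetical).
class WorkerPool {
  constructor(size, createWorker) {
    this._free = [];
    this._queue = [];
    for (let i = 0; i < size; i++) this._free.push(createWorker());
  }

  // params must be plain, structured-cloneable data.
  enqueue(params, onDone) {
    this._queue.push({ params, onDone });
    this._pump();
  }

  // Number of jobs still waiting for a free worker.
  get pending() {
    return this._queue.length;
  }

  // Hand queued jobs to free workers until one side runs out.
  _pump() {
    while (this._free.length > 0 && this._queue.length > 0) {
      const worker = this._free.pop();
      const { params, onDone } = this._queue.shift();
      worker.onmessage = (event) => {
        worker.onmessage = null;
        this._free.push(worker);
        onDone(event.data); // event.data holds whatever the worker posted
        this._pump(); // this worker is free again; start the next job
      };
      worker.postMessage(params);
    }
  }
}
```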

8. Floating Origin

What: Periodically re-center the world around the camera so GPU coordinates stay near zero.

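Why: GPUs use 32-bit floats, which carry about 7 significant decimal digits. Near 100,000 units from the origin, the spacing between representable values is about 0.008 units. Near 6,000,000 (roughly an Earth radius in metres), it is 0.5 units. Vertices snap to that coarse grid, jitter as the camera moves, and triangles flicker. The scene breaks visually.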

How: Vertex positions are computed relative to the camera position, not in absolute world space. The noise function still samples at the true world coordinate (so terrain is consistent everywhere), but the actual mesh data the GPU receives is always near zero.

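```javascript
// _P: vertex on the sphere, already in world space (after localToWorld).
// _D: unit world-space radial direction at this vertex.
// Keep absolute position for noise sampling.
_W.copy(_P);

// Subtract camera position so the GPU only sees small coordinates.
_P.sub(origin);

// Sample height at the true world position, then add it along the
// radial direction (directions are unaffected by the translation).
const height = generateHeight(_W);
_H.copy(_D).multiplyScalar(height);
_P.add(_H);
```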

This is visible in the Rebuild method where origin is the camera position passed in as a parameter. Every vertex subtracts it.
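
The precision argument is easy to check with Math.fround, which rounds a number to the nearest 32-bit float. The sketch below compares two approaches: storing absolute coordinates in float32, versus subtracting the camera position first using JavaScript's 64-bit numbers. toCameraRelative is a hypothetical helper, and the positions are made up.

```javascript
// Subtract the origin in float64 on the CPU, then store the small result as float32.
function toCameraRelative(worldPositions, origin) {
  const out = new Float32Array(worldPositions.length);
  for (let i = 0; i < worldPositions.length; i += 3) {
    out[i] = worldPositions[i] - origin[0];
    out[i + 1] = worldPositions[i + 1] - origin[1];
    out[i + 2] = worldPositions[i + 2] - origin[2];
  }
  return out;
}

// A vertex 0.2 units from a camera about one Earth radius from the origin.
const camera = [0, 6371000.2, 0];
const vertex = [0, 6371000.4, 0];

// Naive: both absolute positions rounded to float32 first.
const naive = Math.fround(vertex[1]) - Math.fround(camera[1]);
// Floating origin: subtract first, then round the small offset.
const relative = toCameraRelative(vertex, camera)[1];
```

Stored as float32, the two absolute positions round to 6371000.5 and 6371000, so their difference comes out as 0.5 instead of 0.2. Subtracting first keeps the small offset intact, which is why the mesh data is built camera-relative on the CPU.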

9. Logarithmic Depth Buffer

What: Replace the standard depth buffer encoding with a logarithmic one.

Why: The default depth buffer distributes most of its precision near the camera's near plane. With near = 1 and far = 1,000,000, half of the depth range is already used up between 1 and 2 units from the camera. That leaves very little precision for distant terrain, which then z-fights.

How: In the vertex shader, compute a logarithmic depth value:

```glsl
vFragDepth = 1.0 + gl_Position.w;
```

In the fragment shader, write it out:

```glsl
gl_FragDepth = log2(vFragDepth) * logDepthBufFC * 0.5;
```

Where logDepthBufFC = 2.0 / log2(farPlane + 1.0). The written depth is therefore log2(1 + w) / log2(far + 1), which runs from 0 at the camera to 1 at the far plane. Every doubling of distance gets roughly the same share of depth values. Close objects still get fine precision, but distant objects also get usable precision instead of nearly zero.
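
The difference between the two encodings can be measured directly. The sketch assumes a 24-bit depth buffer and uses the standard OpenGL-style depth mapping for comparison. logDepth mirrors the shader lines above, which is also the encoding Three.js uses when a WebGLRenderer is created with logarithmicDepthBuffer: true. The sketch counts how many distinct depth values separate two surfaces 10 units apart at a distance of 100,000.

```javascript
// Standard perspective depth in [0, 1] for view distance z
// (OpenGL-style projection, default [0, 1] depth range).
function standardDepth(z, near, far) {
  return (far * (z - near)) / (z * (far - near));
}

// Logarithmic depth as written by the fragment shader:
// log2(1 + w) * logDepthBufFC * 0.5, with logDepthBufFC = 2 / log2(far + 1).
function logDepth(w, far) {
  const logDepthBufFC = 2.0 / Math.log2(far + 1.0);
  return Math.log2(1.0 + w) * logDepthBufFC * 0.5;
}

// Assumed 24-bit depth buffer: count the distinct values between two distances.
const STEPS = 2 ** 24;
const near = 1;
const far = 1e6;
const stdSteps = (standardDepth(100010, near, far) - standardDepth(100000, near, far)) * STEPS;
const logSteps = (logDepth(100010, far) - logDepth(100000, far)) * STEPS;
```

The standard mapping gives the two surfaces less than one depth step (about 0.02), so they z-fight. The logarithmic mapping gives them about 120 steps.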

The cost: writing gl_FragDepth in the fragment shader disables the GPU's early-Z optimization. Every fragment runs the shader even if it would be occluded. This is a performance tradeoff worth making at planetary scale.

10. Mesh Stitching: Gaps, Skirts, and Edge Matching

What: Fix the visible cracks that appear where terrain chunks of different LOD levels meet.

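Why: Every leaf has the same vertex resolution, so a neighbour twice as large spreads the same vertex count over twice the distance. Along the shared edge, the high-res chunk has 64 vertices while its lower-res neighbour has only 32. The vertices do not line up, and you get cracks you can see through.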

How: Three techniques.

Edge skirts: Each chunk generates one extra row of vertices on every side (resolution + 2 instead of resolution). These outer vertices are moved to their inner neighbour's position and pushed down toward the planet center. This forms a "flap" that tucks under the adjacent chunk. If there is a crack, the skirt geometry fills it.

Edge snapping: Each chunk knows the size ratio of its neighbours (stored in a neighbours array). If a neighbour is half the resolution, the high-res edge vertices are lerped to match the low-res grid. Vertex 1 between vertex 0 and vertex 2 gets interpolated to lie exactly on the line between them. Both chunks now agree on the same edge geometry.

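```javascript
// neighbours[side]: size ratio of the neighbour on this side (1 = same LOD).
// edge (hypothetical name): this side's vertex positions as THREE.Vector3,
// in order; edge.length - 1 is assumed to be divisible by the stride.
if (neighbours[side] > 1) {
  const stride = neighbours[side];

  for (let i = 0; i + stride < edge.length; i += stride) {
    const a = edge[i]; // Vertex shared with the low-res neighbour.
    const b = edge[i + stride]; // Next shared vertex.

    // Snap the in-between vertices onto the straight line a -> b.
    for (let k = 1; k < stride; k++) {
      edge[i + k].lerpVectors(a, b, k / stride);
    }
  }
}
```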

Edge normal recomputation: Face-averaged normals at chunk borders are wrong because they only see faces on one side. The code recomputes edge normals using central differences. It samples the height function at nearby points on either side of the vertex, builds two tangent vectors from the height differences, and takes their cross product. This gives correct normals independent of chunk boundaries.
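
The central-difference normal can be sketched for a simple heightfield. The sketch assumes a flat XZ grid with Y up and a made-up eps. On the planet, the same idea should apply with tangents along the cube-face axes and height along the radial direction. Because the normal depends only on the height function, two chunks evaluating the same point get the same result.

```javascript
// Normal of the heightfield y = heightFn(x, z) via central differences.
function centralDifferenceNormal(heightFn, x, z, eps = 0.5) {
  // Tangents along +X and +Z, each spanning 2 * eps.
  const tx = [2 * eps, heightFn(x + eps, z) - heightFn(x - eps, z), 0];
  const tz = [0, heightFn(x, z + eps) - heightFn(x, z - eps), 2 * eps];
  // n = tz x tx, which points up (+Y) on flat ground.
  const n = [
    tz[1] * tx[2] - tz[2] * tx[1],
    tz[2] * tx[0] - tz[0] * tx[2],
    tz[0] * tx[1] - tz[1] * tx[0],
  ];
  const len = Math.hypot(n[0], n[1], n[2]);
  return [n[0] / len, n[1] / len, n[2] / len];
}
```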

When a chunk's neighbour changes LOD level, only the edges need updating. That is what QuickRebuild does. It recomputes edge positions and normals without regenerating the entire mesh.

11. Biome Generation

What: Use a separate noise function to divide the world into biomes (desert, forest, tundra, etc.) that affect terrain color and texture selection.

Why: Without biomes, the entire planet uses the same height-to-texture mapping everywhere. With biomes, different regions of the world look distinct.

How: A second noise generator produces a biome value at each world position. This biome value is separate from the height noise. It uses different parameters (fewer octaves, larger scale) so biomes change gradually over large distances.

The texture splatter reads both the height and the biome value to decide which textures to apply. Low altitude in a desert biome gets sand. Low altitude in a temperate biome gets grass. The biome noise smoothly transitions between regions, and the texture blending handles the crossover.

```javascript
const biomeGenerator = new Noise({
  octaves: 2,
  persistence: 0.5,
  lacunarity: 2.0,
  scale: 2048.0, // Very large scale. Biomes are big regions.
  exponentiation: 1.0, // Get() also reads these two parameters.
  height: 1.0,
  seed: 2, // Different seed from terrain noise.
});

// In the texture splatter:
const biome = biomeGenerator.Get(worldX, worldY);
// Use biome + height + slope to pick texture weights.
```

The biome noise is cheap (only 2 octaves) because it does not need fine detail. It just needs to vary smoothly across the planet.
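
The biome crossover can be sketched as a smooth blend between the two lowland textures. This is a minimal sketch under stated assumptions. The threshold and band width are hypothetical and should match the range your biome noise actually returns. Only the desert/temperate pair from the text is shown.

```javascript
// GLSL-style smoothstep: 0 below edge0, 1 above edge1, smooth in between.
function smoothstep(edge0, edge1, x) {
  const t = Math.min(Math.max((x - edge0) / (edge1 - edge0), 0), 1);
  return t * t * (3 - 2 * t);
}

// Hypothetical: biome values below `threshold` read as desert, above as
// temperate; `band` sets how wide the crossover is in biome-noise units.
function lowlandWeights(biome, threshold = 0.5, band = 0.1) {
  const temperate = smoothstep(threshold - band, threshold + band, biome);
  return { sand: 1 - temperate, grass: temperate };
}
```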

How It All Fits Together

The full pipeline for rendering one frame:

Quadtree subdivides the six cube faces based on camera distance. Produces a set of leaf nodes (chunks to render).

Diff against the previous frame's chunks. New chunks get sent to the worker pool.

Each worker receives plain parameters, reconstructs noise generators, generates vertex positions on the sphere surface using noise + height.

Positions are offset by the camera origin (floating origin). Texture weights come from the biome-aware texture splatter.

Edge stitching snaps borders to match lower-res neighbours. Skirts fill any remaining gaps.

Results are packed into SharedArrayBuffer and sent back zero-copy.

Main thread sets the geometry attributes and makes the chunk visible.

The terrain shader applies triplanar texturing with bombing, computes lighting, and writes logarithmic depth.

Atmospheric scattering renders the sky and distance haze.
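
The diff step can be sketched with string keys. This is an assumption about the bookkeeping, not the article's code. chunkKey and diffChunks are hypothetical, and each chunk is identified by its face, center, and size.

```javascript
// Hypothetical key: one string per quadtree leaf (face, center, size).
function chunkKey(face, cx, cy, size) {
  return `${face}/${cx}/${cy}/${size}`;
}

// previous: Map of key -> chunk built in earlier frames.
// next: Set of keys for this frame's quadtree leaves.
function diffChunks(previous, next) {
  const toCreate = [];
  const toRemove = [];
  for (const key of next) if (!previous.has(key)) toCreate.push(key);
  for (const key of previous.keys()) if (!next.has(key)) toRemove.push(key);
  return { toCreate, toRemove };
}
```

Only the toCreate keys go to the worker pool. A common refinement is to keep the toRemove chunks visible until their replacements finish, so holes never open up while the camera moves.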

Each part solves one specific problem. Together they produce a planet you can walk on, fly over, and zoom out from to see from space, all running smoothly in a browser.