Your game runs fine on desktop. Why does it crash on mobile?


Intro

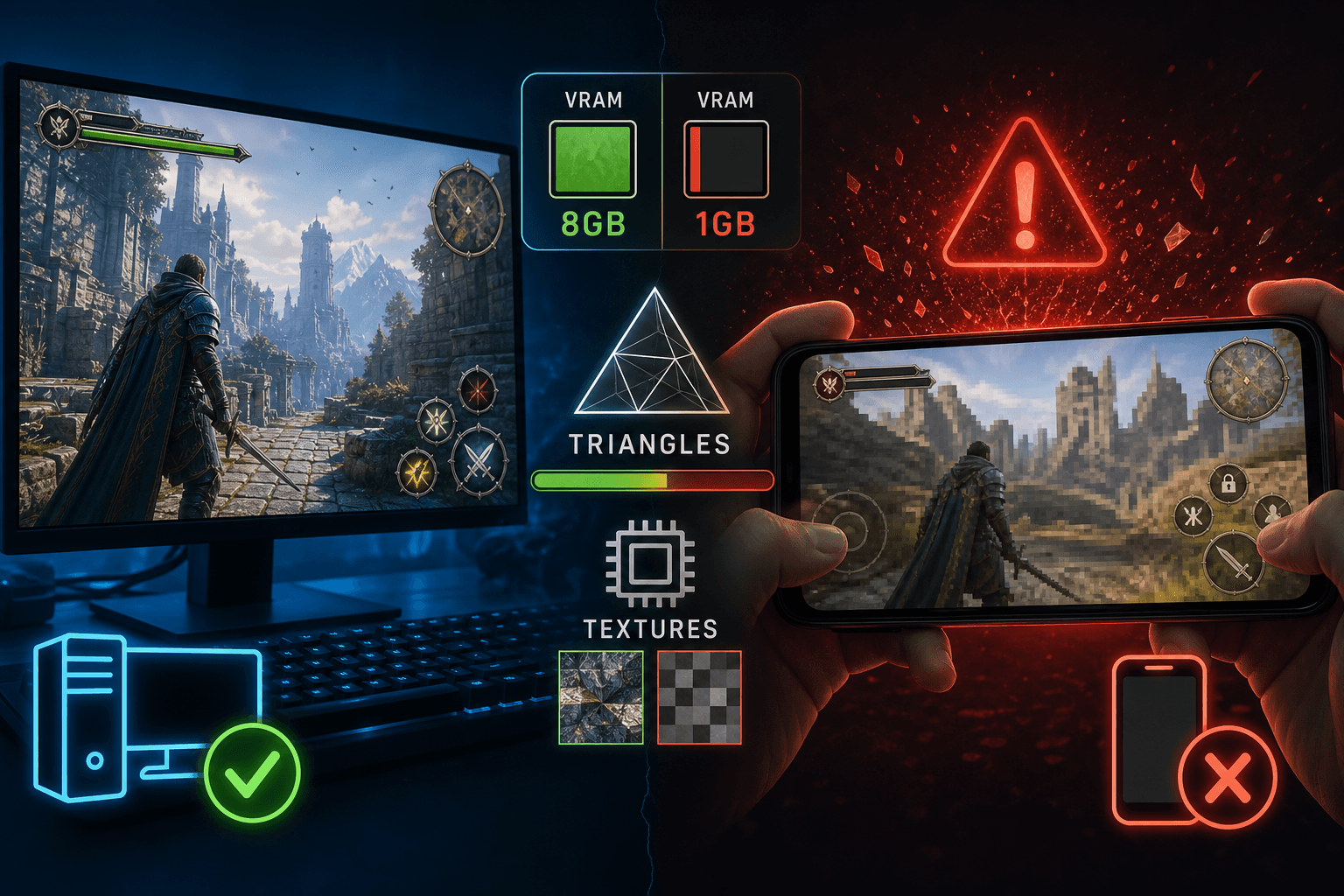

You built a game on the web. It runs at 60 fps on your laptop. You open it on your phone and it stutters, overheats, or just crashes. Same code, same scene, completely different result.

This post explains why. By the end you'll understand the actual budgets that matter (triangles, textures, VRAM, draw calls, fill rate), what each one costs, and why mobile is harder than desktop on every single one of them.

The pieces involved

Before we compare desktop vs mobile, quick definitions of the parts that matter.

CPU. The general purpose processor. Runs your JavaScript, your game logic, physics, AI. One thing at a time per core (mostly).

GPU. The graphics processor. Designed to do thousands of small things in parallel. Draws every triangle, runs every shader, fills every pixel.

RAM. The CPU's working memory. Where your code, variables, and game state live.

VRAM. The GPU's working memory. Where textures, meshes, and shaders live so the GPU can read them fast. On desktop, this is usually dedicated memory on the graphics card, separate from system RAM. On mobile, it's the same physical RAM the CPU uses.

Bandwidth. How fast data can move between memory and the processor. Often more important than raw memory size, because if your processor can't get the data fast enough, it sits idle.

Triangles. Every 3D shape in a game is made of triangles. A character might be 20,000 triangles. A whole scene might be a few million.

Textures. Any image data the GPU reads. People usually picture textures as images wrapped around 3D models, but the term covers everything: sprites, particles, UI icons, fonts, normal maps, lookup tables, even the framebuffer itself. A 2048x2048 RGBA texture is about 16 MB uncompressed. Most scenes have hundreds of textures.

Draw calls. Each time the CPU tells the GPU "draw this thing," that's a draw call. Each one has fixed overhead, regardless of how big the thing is.

Fill rate. How fast the GPU can fill pixels with color. Limited by both the GPU's speed and the screen's resolution.

Why mobile is fundamentally different

The single biggest difference: memory architecture.

A gaming desktop has two separate memory pools. The CPU has its own RAM (16 GB or more). The GPU has its own dedicated VRAM (8 GB or more on a modern card, up to 24 GB on a flagship). They talk to each other over a fast bus called PCIe. Each processor reads from its own private memory at full speed.

A phone has one memory pool, shared between CPU and GPU. Your phone has, say, 6 to 12 GB total RAM depending on the model. The CPU and GPU both pull from the same pool. If your game eats 4 GB on textures, that's 4 GB the system, the OS, your game logic, and every other app on the phone don't get.

This is called unified memory (Apple's name) or shared memory architecture. It has some upsides (no copying data between CPU and GPU), but it has one massive downside: there's just way less of it for graphics.

A discrete GPU on a desktop might have 8 to 24 GB of VRAM. A flagship phone has maybe 2 to 5 GB practically available for graphics. Mid-range and older phones have less. The math is brutal.

What actually costs VRAM

When people say "I have a triangle budget," what they really mean is "I have a memory budget." Let's see where it goes.

Textures dominate. A single 2048x2048 RGBA texture uncompressed is 16 MB. AAA games often ship characters with 4 or 5 textures at 2K each (color, normal, roughness, metallic, ambient occlusion), which adds up to 64 to 80 MB per character. A scene with 50 unique objects on that budget can hit 4 GB easily.

But that's a choice, not a requirement. Plenty of great-looking games ship at 1K or even 512px textures and lean on art direction, lighting, and good materials instead of resolution. Halving a texture's width and height cuts its memory to a quarter. Halve it again and you're down to one sixteenth. For mobile, this is one of the biggest levers you have.
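To make that arithmetic concrete, here is a minimal sketch that estimates the memory of an uncompressed RGBA texture at a few resolutions. The function name and the optional mipmap flag are my own illustration, not part of any engine API. The mipmap estimate (about one third extra for a full chain) is an addition to the numbers above.

```typescript
// Bytes per pixel for uncompressed 8-bit RGBA (4 channels x 1 byte).
const RGBA8_BYTES_PER_PIXEL = 4;
const MIB = 1024 * 1024;

/**
 * Estimate GPU memory for one uncompressed RGBA texture.
 * A full mipmap chain adds roughly one third on top of the base level.
 */
function uncompressedTextureBytes(
  width: number,
  height: number,
  withMipmaps = false
): number {
  const base = width * height * RGBA8_BYTES_PER_PIXEL;
  return withMipmaps ? Math.ceil((base * 4) / 3) : base;
}

for (const size of [2048, 1024, 512]) {
  const mib = uncompressedTextureBytes(size, size) / MIB;
  console.log(`${size}x${size} RGBA: ${mib} MiB`);
}
// 2048x2048 RGBA: 16 MiB
// 1024x1024 RGBA: 4 MiB
// 512x512 RGBA: 1 MiB
```

Each halving of the resolution divides the cost by four, which is why dropping from 2K to 512px takes a texture from 16 MiB to 1 MiB.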

Meshes are smaller but add up. A character mesh with 20,000 triangles is roughly 1 to 2 MB. A scene with hundreds of unique meshes might be 200 to 500 MB. Less than textures, still significant.

Shaders, framebuffers, depth buffers, shadow maps. Each of these takes VRAM too. A 4096x4096 shadow map at 32 bits per texel is 64 MB. A 32-bit depth buffer for a 1080p screen is about 8 MB. Modern rendering uses many of these.

So the actual breakdown for a game:

60 to 80% textures

10 to 20% meshes

10 to 20% other (framebuffers, shadows, post-processing buffers, shaders)

Triangle count itself is rarely the memory bottleneck. Textures are. This is why "what's your triangle budget" is the wrong first question. The right first question is "what's your texture memory budget."

Use KTX2 (this is non-negotiable)

KTX2 is the current Khronos container format for GPU-compressed textures. It stores texture data in formats the GPU can read directly, like BC7 on desktop and ASTC or ETC2 on mobile. Importantly, the texture remains compressed in VRAM. The GPU decodes blocks as needed, without decompressing the entire image.

Without KTX2, your pipeline looks like this: PNG on disk → CPU decodes it to raw RGBA pixels → upload millions of uncompressed pixels to the GPU → eat the full VRAM cost. A 2048x2048 PNG is maybe 2 MB on disk but 16 MB in VRAM.

With KTX2 + BC7 (desktop) or ASTC (mobile): the same texture is roughly 4 MB on disk AND 4 MB in VRAM. Visually near-identical (these formats are lossy, but the loss is hard to spot). 4x less memory. Less bandwidth. No CPU-side PNG decoding.

This is required for mobile. There is no path to good performance shipping raw PNGs on a phone. It's also a free win on desktop. Every modern engine and pipeline supports it. There's no reason not to use it.
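Where does the 4 MB figure come from? A rough sketch, assuming the standard block layouts: BC7 stores every 4x4 block of texels in 16 bytes, and ASTC stores every block in 16 bytes regardless of its block size. The function name below is hypothetical and only covers the base mip level.

```typescript
// BC7 and ASTC both use 16 bytes (128 bits) per compressed block.
const BYTES_PER_BLOCK = 16;

/** Size in bytes of one mip level of a block-compressed texture. */
function blockCompressedBytes(
  width: number,
  height: number,
  blockWidth: number,
  blockHeight: number
): number {
  const blocksX = Math.ceil(width / blockWidth);
  const blocksY = Math.ceil(height / blockHeight);
  return blocksX * blocksY * BYTES_PER_BLOCK;
}

const rawBytes = 2048 * 2048 * 4; // uncompressed RGBA8
const bc7Bytes = blockCompressedBytes(2048, 2048, 4, 4); // BC7 blocks are always 4x4
const astc6x6Bytes = blockCompressedBytes(2048, 2048, 6, 6); // a larger ASTC block size

console.log(rawBytes / bc7Bytes); // 4
console.log((rawBytes / astc6x6Bytes).toFixed(1)); // close to 9
```

Larger ASTC blocks trade quality for size, which is how the savings can go beyond 4x.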

Why triangle count still matters (just differently)

Triangle count doesn't usually break VRAM. It breaks the CPU and GPU's ability to process them in time.

Each triangle has to:

Be transformed by the vertex shader (the CPU sets up the draw, the GPU does the math).

Be tested for visibility (clipping, culling).

Be rasterized into pixels (GPU work).

Have each of its pixels shaded (more GPU work).

A modern desktop GPU can chew through 30 to 100 million triangles per frame at 60 fps. A flagship phone GPU does maybe 5 to 15 million per frame. Mid-range phones do 1 to 3 million.

So if your scene has 5 million triangles, desktop laughs. Phone struggles. Same data, different processing speed.

The reason is power. Phone GPUs are tuned to fit in a battery-powered handheld that can't dissipate heat. They're efficient per watt, but they have a fraction of the raw throughput of a desktop card that draws 300 watts and has fans on it.

Draw calls: the silent killer

Each draw call has fixed CPU overhead. Doesn't matter if you're drawing one triangle or one million. The setup cost is per-call, not per-triangle.

On a desktop, you can issue maybe 5,000 to 10,000 draw calls per frame at 60 fps. On mobile, more like 100 to 500. The CPU on a phone is slower AND graphics commands are typically issued from a single thread on the web, so each draw call costs more relative to the budget.

This is why mobile-targeted games aggressively use batching (combining many objects into one draw call) and instancing (drawing the same mesh many times in one call). It's not optional. If your scene has 2,000 individual draw calls and you target mobile, you're already over budget before you've drawn anything.
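As a rough illustration of why instancing matters so much, the sketch below counts draw calls for a scene with and without grouping. The `Renderable` shape and both function names are hypothetical, and it assumes objects sharing a mesh and material can be drawn in a single instanced call, which real engines only allow under certain conditions.

```typescript
// Hypothetical minimal description of something the renderer has to draw.
interface Renderable {
  meshId: string;
  materialId: string;
}

/** Without batching or instancing, every object is its own draw call. */
function naiveDrawCalls(objects: Renderable[]): number {
  return objects.length;
}

/**
 * With instancing, objects sharing a mesh and material are drawn together,
 * so the count drops to the number of unique (mesh, material) pairs.
 */
function instancedDrawCalls(objects: Renderable[]): number {
  const groups: Record<string, true> = {};
  for (const o of objects) {
    groups[`${o.meshId}|${o.materialId}`] = true;
  }
  return Object.keys(groups).length;
}

// 2,000 objects built from 4 meshes and one shared material.
const meshIds = ["tree", "rock", "bush", "fence"];
const sceneObjects: Renderable[] = [];
for (let i = 0; i < 2000; i++) {
  sceneObjects.push({ meshId: meshIds[i % meshIds.length], materialId: "atlas" });
}

console.log(naiveDrawCalls(sceneObjects)); // 2000
console.log(instancedDrawCalls(sceneObjects)); // 4
```

Same scene, same triangles, but the CPU issues 4 commands instead of 2,000.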

Fill rate and screen resolution

The GPU has to color every pixel on screen, every frame. More pixels = more work.

A 1080p screen is about 2 million pixels. A desktop 4K monitor is 8 million. An iPhone Pro is around 3 million. So far so similar.

But mobile screens have a sneaky factor: they often run at higher pixel densities, so even though the visible size is small, the GPU is filling almost as many pixels as a desktop, with a fraction of the GPU power.

And many games use multiple full-screen passes (post-processing, bloom, screen-space reflections). Each pass runs the fragment shader on every pixel again. If you have 5 full-screen passes at 3 million pixels per pass, that's 15 million fragment shader invocations per frame just for post-processing.

This is called being fill rate bound. The GPU isn't slow because of triangles, it's slow because it's filling too many pixels. Mobile hits this faster because:

Pixel density is high.

GPU fill rate is lower.

Heat throttles fill rate further the longer you play.
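The full-screen pass arithmetic above is easy to sketch. The resolution below is an assumed example of roughly 3 million pixels, and the function name is my own. It only counts full-screen passes, not the scene geometry itself.

```typescript
/** Fragment shader invocations per frame from full-screen passes alone. */
function fullScreenFragmentsPerFrame(
  widthPx: number,
  heightPx: number,
  passes: number
): number {
  return widthPx * heightPx * passes;
}

// Assumed example resolution of about 3 million pixels.
const perFrame = fullScreenFragmentsPerFrame(2556, 1179, 5);
const perSecond = perFrame * 60; // at a 60 fps target

console.log((perFrame / 1e6).toFixed(1)); // "15.1" (million per frame)
console.log((perSecond / 1e6).toFixed(0)); // "904" (million per second)
```

Dropping from 5 passes to 2 cuts that number by 60% without touching a single mesh.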

Heat and throttling: the hidden constraint

Desktop and console GPUs rely on power outlets and cooling systems. Mobile GPUs depend on batteries and passive cases.

In practice, a phone runs at full speed briefly, then heats up, causing the OS to reduce the GPU clock to prevent damage. This is thermal throttling.

A game running at 60 fps initially and dropping to 35 fps later isn't faulty; it's throttled. The hardware can achieve 60 fps but can't maintain it without overheating.

Mobile optimization is different. It's not about hitting 60 fps in a benchmark; it's about maintaining 60 fps for an hour without overheating. Sustained performance is more crucial than peak performance.
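One way to check sustained performance rather than peak performance is to watch a rolling average of frame times. The class below is a minimal sketch under my own assumptions (a 120-frame window and a 60 fps budget), not a standard API. In a real game you would feed it the frame delta from your render loop and lower quality when it reports trouble.

```typescript
/**
 * Tracks a rolling average of frame times and reports when the average
 * stays over budget, which is what sustained throttling tends to look like.
 */
class FrameTimeMonitor {
  private samples: number[] = [];
  private windowSize: number;
  private budgetMs: number;

  constructor(windowSize: number, budgetMs: number) {
    this.windowSize = windowSize;
    this.budgetMs = budgetMs;
  }

  /** Record one frame's duration in milliseconds, dropping the oldest sample. */
  record(frameMs: number): void {
    this.samples.push(frameMs);
    if (this.samples.length > this.windowSize) {
      this.samples.shift();
    }
  }

  averageMs(): number {
    if (this.samples.length === 0) return 0;
    let sum = 0;
    for (const s of this.samples) sum += s;
    return sum / this.samples.length;
  }

  /** True only once the window is full and its average exceeds the budget. */
  isOverBudget(): boolean {
    return (
      this.samples.length === this.windowSize &&
      this.averageMs() > this.budgetMs
    );
  }
}

// Budget of 1000 / 60 (about 16.7 ms) per frame, averaged over 120 frames.
const frameMonitor = new FrameTimeMonitor(120, 1000 / 60);
for (let i = 0; i < 120; i++) frameMonitor.record(16); // cool device, ~60 fps
console.log(frameMonitor.isOverBudget()); // false
for (let i = 0; i < 120; i++) frameMonitor.record(28.5); // throttled, ~35 fps
console.log(frameMonitor.isOverBudget()); // true
```

Averaging over a window means a single slow frame doesn't trigger anything, but a device that has settled at 35 fps does.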

Why iPhones are weirdly good (and weirdly bad)

A quick note about iPhones. Apple's chips are among the most powerful mobile GPUs available. The A17 Pro and M-series chips can compete with mid-range desktop GPUs in raw power. Modern Apple silicon includes hardware-accelerated ray tracing and 8 to 16 GB of shared memory.

However, the same limits apply. The GPU shares memory with the CPU, and heat is still a problem. The screen has high pixel density, which makes fill rate costly.

Android devices vary widely. A top Samsung phone is similar to an iPhone, but an older, cheaper Android phone is much less powerful. When targeting "mobile," you're really aiming for "the weakest phone you'll accept," which is often much less capable than the iPhone you tested on.

What this means for you

If you're building a game on the web and want it to work on mobile, the rough budgets are:

| Resource | Desktop budget | Mobile budget |
|---|---|---|
| Triangles per frame | 5 to 30 million | 0.5 to 3 million |
| Texture memory | 2 to 6 GB | 200 to 800 MB |
| Draw calls per frame | 2,000 to 10,000 | 100 to 500 |
| Full-screen passes | 5 to 10 | 1 to 3 |
| Sustained GPU load | high | medium (heat) |

Same code, running into a roughly 10x smaller budget on almost every axis, while also having to share resources with the OS, the browser, and other apps.
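If you want to catch this before shipping, a simple budget check is a reasonable start. The sketch below uses the upper ends of the mobile column in the table above. The `FrameStats` shape and the function name are hypothetical, and you would fill in the stats from whatever your engine reports.

```typescript
interface FrameStats {
  triangles: number;
  textureMemoryMB: number;
  drawCalls: number;
  fullScreenPasses: number;
}

// Upper ends of the mobile column in the table above.
const MOBILE_BUDGET: FrameStats = {
  triangles: 3_000_000,
  textureMemoryMB: 800,
  drawCalls: 500,
  fullScreenPasses: 3,
};

/** Return the name of every resource that exceeds its budget. */
function overBudget(stats: FrameStats, budget: FrameStats): string[] {
  const exceeded: string[] = [];
  if (stats.triangles > budget.triangles) exceeded.push("triangles");
  if (stats.textureMemoryMB > budget.textureMemoryMB) exceeded.push("textureMemoryMB");
  if (stats.drawCalls > budget.drawCalls) exceeded.push("drawCalls");
  if (stats.fullScreenPasses > budget.fullScreenPasses) exceeded.push("fullScreenPasses");
  return exceeded;
}

// A scene that is comfortable on desktop.
const desktopScene: FrameStats = {
  triangles: 5_000_000,
  textureMemoryMB: 4000,
  drawCalls: 2000,
  fullScreenPasses: 5,
};

console.log(overBudget(desktopScene, MOBILE_BUDGET));
// ["triangles", "textureMemoryMB", "drawCalls", "fullScreenPasses"]
```

A scene that fits the desktop column can fail every mobile check at once, which is usually what "it crashes on my phone" looks like in numbers.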

When your desktop game crashes on mobile, the cause is almost always one of:

Texture memory blew the VRAM budget.

Draw call count is too high for the CPU.

Fill rate is saturated by post-processing on a high-DPI screen.

Thermal throttling kicked in and frame time spiked.

What actually helps

Concrete things that move the needle:

Ship textures as KTX2. Covered above. This alone can cut texture memory 4x to 8x. Required, not optional.

Drop texture resolution on mobile. A texture at 1024x1024 instead of 2048x2048 is 4x cheaper. You probably won't notice the visual difference on a phone screen. You could probably even go much lower on mobile: 512x512 or 256x256.

Batch and instance ruthlessly. 50 trees should be one draw call, not 50. Do the same on desktop tbh, wherever you can.

Render at lower internal resolution. Phone screens are dense. An iPhone Pro packs 9 physical pixels into every 1 CSS pixel (devicePixelRatio of 3). Render at native density and the GPU shades 9x more pixels than a regular screen of the same size. Cap the pixel ratio instead:

renderer.setPixelRatio(Math.min(window.devicePixelRatio, 1.5));

Fewer pixels rendered, the browser stretches the result to fit, and the fragment shader does 4x less work than at a pixel ratio of 3. The image looks slightly softer on a small phone screen. Nobody notices. Framerate and heat get massively better.

Frustum cull and occlusion cull aggressively. Don't draw what isn't visible. Use backface culling wherever possible too.
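Back on the pixel-ratio cap for a moment: here is a rough sketch of where the 4x figure comes from. The CSS canvas size of 400x800 is an assumed example, and both helper names are my own. The flooring mirrors how a canvas ends up with whole-pixel dimensions.

```typescript
/** Clamp the device pixel ratio to a maximum render ratio. */
function cappedPixelRatio(dpr: number, cap: number): number {
  return Math.min(dpr, cap);
}

/** Pixels shaded per frame for a canvas of the given CSS size and ratio. */
function shadedPixels(
  cssWidth: number,
  cssHeight: number,
  pixelRatio: number
): number {
  return Math.floor(cssWidth * pixelRatio) * Math.floor(cssHeight * pixelRatio);
}

// Assumed example: a 400x800 CSS pixel canvas on a devicePixelRatio 3 phone.
const nativePixels = shadedPixels(400, 800, 3);
const cappedPixels = shadedPixels(400, 800, cappedPixelRatio(3, 1.5));

console.log(nativePixels); // 2880000
console.log(cappedPixels); // 720000
console.log(nativePixels / cappedPixels); // 4
```

On a device whose ratio is already below the cap, `cappedPixelRatio` returns the device's own value, so desktops with a ratio of 1 are unaffected.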

LODs (level of detail). Draw simpler versions of meshes when they're far away. Have a thorough LOD system in place, and be aggressive with it on mobile. Octahedral impostors are great too for things medium-far away. For things very far away, they can just become flat images.
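A distance-based LOD picker can be very small. The sketch below is one possible shape for it. The level names follow the paragraph above, but the distance thresholds are made-up placeholders you would tune per game and per device tier.

```typescript
// Hypothetical LOD table: each level applies up to maxDistance (world units).
interface LodLevel {
  label: string;
  maxDistance: number;
}

const LOD_LEVELS: LodLevel[] = [
  { label: "full mesh", maxDistance: 15 },
  { label: "reduced mesh", maxDistance: 40 },
  { label: "octahedral impostor", maxDistance: 120 },
  { label: "flat image", maxDistance: Infinity },
];

/** Pick the first level whose range covers the camera distance. */
function selectLod(distance: number, levels: LodLevel[]): LodLevel {
  for (const level of levels) {
    if (distance <= level.maxDistance) return level;
  }
  // Fallback for tables without an Infinity entry: use the coarsest level.
  return levels[levels.length - 1];
}

console.log(selectLod(5, LOD_LEVELS).label); // "full mesh"
console.log(selectLod(80, LOD_LEVELS).label); // "octahedral impostor"
console.log(selectLod(500, LOD_LEVELS).label); // "flat image"
```

On mobile you could simply scale every `maxDistance` down so objects switch to cheaper versions sooner.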