Why every sound in your game is the wrong volume

How to make the sound effects in your game sound good.


Introduction

You add a gunshot from a free sound library. You generate an explosion with AI. You record a footstep with your phone. You drop them all into your game.

The gunshot blasts your ears. The footstep is barely there. The explosion sounds weirdly quiet.

So you do what anyone would do. You start dragging volume sliders around. Turn this one down. Turn that one up. Playtest. Still wrong. Drag more sliders. It is an endless guessing game that never feels right.

Maybe you search for a solution and someone tells you to normalize your audio files. Normalizing means scaling a sound file up or down so its loudest point hits a target level. If every file's loudest point is at the same level they should all sound equally loud. Right?

You try it. It still sounds wrong.

This post explains why. And the fix comes from broadcast science that TV and film figured out decades ago.

What "loud" actually means

To understand why normalizing failed we need to talk about what volume actually is in a sound file.

Sound in a computer is a long list of numbers. Each number is called a sample. It represents the intensity of the sound wave at one tiny moment in time. Bigger numbers mean louder sound. These numbers have a maximum. In digital audio the scale goes from -1.0 to +1.0.

We measure how close a sound gets to that maximum using a unit called dB. It stands for decibels. 0 dB means the signal is at maximum. -6 dB means the samples are about half as tall. -12 dB means half of that half. So a quarter of the height. Each -6 dB step cuts the amplitude in half again. It is not a straight line like 100, 94, 88. It is more like 100, 50, 25, 12.5. Each step shrinks by the same ratio. One caveat. Power goes with the square of the amplitude, so halving the power takes only about -3 dB.
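To make the dB scale concrete, here is a minimal sketch of the conversion between sample height and dB. The function names are made up for this post. It assumes 1.0 is full scale and uses the standard 20 * log10 rule for amplitudes.

```python
import math

def amplitude_to_db(amplitude: float) -> float:
    """Convert a sample magnitude (1.0 = full scale) to dB relative to full scale."""
    if amplitude <= 0.0:
        return float("-inf")  # silence has no finite dB value
    return 20.0 * math.log10(amplitude)

def db_to_amplitude(db: float) -> float:
    """Convert dB relative to full scale back to a linear sample magnitude."""
    return 10.0 ** (db / 20.0)

# Each -6 dB step roughly halves the amplitude.
for db in (0, -6, -12, -18):
    print(f"{db:>4} dB -> amplitude {db_to_amplitude(db):.3f}")
```

The halving is not exact. -6 dB is about 0.501 of full scale, which is why people round it to "half".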

The peak of a sound file is simply the tallest sample. The single biggest number in that list. When you normalized your files you scaled them so every file's tallest sample hit the same level.

Here is why that does not work. A snare drum can have its tallest sample at maximum. 0 dB. But it sounds quiet. That spike lasts a fraction of a millisecond. Your brain barely registers it. A soft synth pad can have its tallest sample way below maximum. -6 dB. But it sounds louder because the sound is sustained. It fills the space over time.

Your brain does not care about the tallest spike. It measures the sustained energy of a sound. How much power it delivers over time. Not the single loudest moment.

Normalizing to peak lines up the spikes. But spikes are not what humans hear.
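Here is a small sketch of that gap between peak and sustained energy. It uses two synthetic signals as stand-ins, not real recordings: a single full-scale spike (a crude substitute for a sharp drum hit) and a half-amplitude sine wave (a crude substitute for a pad). The sample rate and helper names are assumptions for illustration.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate

def peak_db(samples):
    """Level of the single tallest sample, in dB relative to full scale."""
    peak = max(abs(s) for s in samples)
    return 20.0 * math.log10(peak)

def rms_db(samples):
    """Average energy over the whole signal, in dB relative to full scale."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(mean_square)

# One second of silence containing a single full-scale spike.
click = [0.0] * SAMPLE_RATE
click[100] = 1.0

# One second of a 220 Hz sine at half amplitude (about -6 dB peak).
pad = [0.5 * math.sin(2 * math.pi * 220 * n / SAMPLE_RATE)
       for n in range(SAMPLE_RATE)]

for name, signal in (("click", click), ("pad", pad)):
    print(f"{name}: peak {peak_db(signal):.1f} dB, average {rms_db(signal):.1f} dB")
```

The click wins on peak and loses badly on average energy. Peak normalizing would treat the click as the louder file.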

Your ears are not flat

There is another layer. Your ears do not treat all sounds equally across the pitch range.

Quick detour. Sound pitch is measured in Hz which stands for hertz. It means vibrations per second. A deep bass rumble might be 100 Hz. The parts of a speaking voice that make words clear sit around 1000 Hz to 4000 Hz. A high pitched whistle might be 10000 Hz. kHz is just shorthand for thousands of hertz. So 1 kHz means 1000 Hz. 4 kHz means 4000 Hz.

Now here is the key fact. Humans are most sensitive to sounds in the 1 kHz to 4 kHz range. This is the speech range. Evolution tuned our ears to hear voices clearly. We are much less sensitive to deep bass and very high pitches.

This means a bass rumble and an alarm tone can carry the same energy but the alarm will sound much louder. Your ears literally amplify that mid-high range more.

So even measuring average energy is not enough. We need a measurement that accounts for how uneven human hearing is.

K-weighting. The filter that models your ears.

The solution is a filter called K-weighting. It shapes the audio to match human perception before measuring anything.

It is just two simple filters stacked together.

The first one makes higher pitched sounds (above roughly 1.5 kHz) louder by about 4 dB. Remember dB is just our unit for measuring volume. So this filter turns up the treble a bit. Why? Because your head and ear canal naturally do this to sound before it reaches your eardrum. The filter copies what your body already does.

The second one makes bass sounds (below about 100 Hz) quieter. Why? Because your ears are bad at hearing low bass. So the filter turns it down to match.

After these two filters the audio looks the way your brain actually hears it. Now when you measure the energy you get a number that matches perceived loudness.
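The two filters can be sketched as a pair of second-order (biquad) filters. The coefficients below are the ones I recall from ITU-R BS.1770 for 48 kHz audio, so treat them as an assumption and check them against the spec before shipping. They are not valid at other sample rates. The function names are made up for this post.

```python
import math

# Assumed BS.1770 coefficients, valid for 48 kHz audio only.
SHELF_B = (1.53512485958697, -2.69169618940638, 1.19839281085285)
SHELF_A = (1.0, -1.69065929318241, 0.73248077421585)
HIGHPASS_B = (1.0, -2.0, 1.0)
HIGHPASS_A = (1.0, -1.99004745483398, 0.99007225036621)

def biquad(samples, b, a):
    """Run a second-order filter (direct form I) over a list of samples."""
    out = []
    x1 = x2 = y1 = y2 = 0.0
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def k_weight(samples):
    """Filter 1 boosts the treble, filter 2 rolls off the low bass."""
    return biquad(biquad(samples, SHELF_B, SHELF_A), HIGHPASS_B, HIGHPASS_A)

def gain_db(freq_hz, seconds=1.0, rate=48_000):
    """Measure how much K-weighting changes the level of a pure tone."""
    n = int(seconds * rate)
    tone = [math.sin(2 * math.pi * freq_hz * i / rate) for i in range(n)]
    filtered = k_weight(tone)
    half = n // 2  # skip the first half so the filter has settled

    def rms(xs):
        return math.sqrt(sum(x * x for x in xs) / len(xs))

    return 20.0 * math.log10(rms(filtered[half:]) / rms(tone[half:]))

for freq in (30, 1000, 10000):
    print(f"{freq} Hz: {gain_db(freq):+.2f} dB")
```

Feeding it pure tones is a quick sanity check. The low tone should come out quieter and the high tone should come out roughly 4 dB louder.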

LUFS. The broadcast standard your game should steal.

The broadcast industry took K-weighting and built a full measurement system around it. Its unit is LUFS. Loudness Units relative to Full Scale. The official spec is ITU-R BS.1770-4.

Streaming services like Netflix, Spotify, and YouTube manage loudness with this kind of measurement.

The algorithm is simple.

Step 1. Run your audio through the K-weighting filter.

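Step 2. Chop the filtered audio into 400 millisecond blocks. The blocks overlap by 75%, so a new block starts every 100 milliseconds. Measure the energy of each block.

Step 3. Gate out silence. Blocks below a certain threshold get ignored. You do not want quiet pauses dragging your loudness number down. This happens in two passes. First an absolute gate removes dead silence. That is anything below -70 LUFS. Then a relative gate removes quiet sections. That is anything more than 10 LU (loudness units, the same size as dB) below the average of the blocks that survived the first gate.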

Step 4. Average the remaining blocks.

That is it. K-weight. Chop. Gate. Average. The result is a single number in LUFS that tells you how loud something actually feels to a human.
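Steps 2 to 4 can be sketched in a few lines. This is a simplified version, not a certified meter. It assumes mono audio that has already been K-weighted, a 48 kHz sample rate, and the BS.1770 gate values of -70 LUFS and -10 LU. The -0.691 offset is the calibration constant from the spec. The function name and the test signals are made up for this post, and the demo tone skips K-weighting to keep the example short.

```python
import math

def integrated_loudness(k_weighted, rate=48_000):
    """Gated loudness in LUFS for mono audio that is already K-weighted."""
    block = int(0.400 * rate)  # 400 ms blocks
    hop = block // 4           # 75% overlap: a new block every 100 ms
    energies = []
    for start in range(0, len(k_weighted) - block + 1, hop):
        chunk = k_weighted[start:start + block]
        energies.append(sum(s * s for s in chunk) / block)

    def to_lufs(mean_square):
        if mean_square <= 0.0:
            return float("-inf")
        return -0.691 + 10.0 * math.log10(mean_square)

    # Pass 1: the absolute gate drops dead silence.
    kept = [e for e in energies if to_lufs(e) > -70.0]
    if not kept:
        return float("-inf")

    # Pass 2: the relative gate drops blocks 10 LU below the average so far.
    threshold = to_lufs(sum(kept) / len(kept)) - 10.0
    kept = [e for e in kept if to_lufs(e) > threshold]

    # Step 4: average what is left.
    return to_lufs(sum(kept) / len(kept))

# Demo: two seconds of a 1 kHz tone, with and without four seconds of silence.
tone = [math.sin(2 * math.pi * 1000 * n / 48_000) for n in range(2 * 48_000)]
tone_only = integrated_loudness(tone)
with_silence = integrated_loudness(tone + [0.0] * (4 * 48_000))
print(f"tone only: {tone_only:.2f} LUFS, with silence: {with_silence:.2f} LUFS")
```

Thanks to the gates, appending silence barely moves the number. A plain average over the whole file would have dropped by several dB.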

The invisible enemy. Intersample peaks.

There is a problem hiding in your audio that you cannot see.

Think of each sample as a dot on a graph. You have a dot at +0.9 and the next dot at -0.9. Both dots are below the maximum of 1.0. Looks fine.

But sound is not dots. It is a smooth wave. When your headphones or speakers turn those dots back into a smooth wave they have to draw a curve through them. And when the dots jump around sharply like +0.9 to -0.9 that curve can swing wide around them. It overshoots past 1.0. Past the maximum.

Your hardware tries to play a sound louder than it can handle. It clips. You hear distortion. But if you looked at just the dots you would never know. Every dot was under the limit.

These hidden overshoots are called intersample peaks.

The fix is to not just look at the dots. Instead you calculate what the smooth curve between the dots would look like. You do this by inserting extra points between every pair of original dots. This is called oversampling. Four times as many points is a common choice. Now you can see the overshoots and measure the real peak. The unit for this is dBTP. Decibels true peak.
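Here is a rough sketch of that idea. It estimates the curve between the dots with a windowed-sinc interpolator at 4x oversampling. Real meters use carefully designed filters, so treat this as an illustration under simple assumptions. The function name, the window size, and the test signal are all made up for this post.

```python
import math

def true_peak(samples, oversample=4, half_width=16):
    """Estimate the peak of the smooth curve through the samples."""
    peak = 0.0
    n = len(samples)
    for i in range(n):
        for k in range(oversample):
            t = i + k / oversample  # position measured in original samples
            value = 0.0
            lo = max(0, i - half_width + 1)
            hi = min(n, i + half_width + 1)
            for m in range(lo, hi):
                d = t - m
                if d == 0.0:
                    value += samples[m]  # exactly on an original dot
                    continue
                sinc = math.sin(math.pi * d) / (math.pi * d)
                # Hann window fades the kernel out toward its edges.
                window = 0.5 * (1.0 + math.cos(math.pi * d / half_width))
                value += samples[m] * sinc * window
            peak = max(peak, abs(value))
    return peak

# A full-scale sine whose samples always land at about 0.707 of its height.
signal = [math.sin(math.pi / 2 * n + math.pi / 4) for n in range(64)]
sample_peak = max(abs(s) for s in signal)
estimated = true_peak(signal)
print(f"sample peak: {20 * math.log10(sample_peak):.2f} dBFS")
print(f"true peak:   {20 * math.log10(estimated):.2f} dBTP")
```

The dots say the signal peaks around -3 dB. The curve between them actually reaches close to full scale.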

Putting it together for games

Here is how to set up your game audio using what broadcast science already figured out.

Foreground sounds like effects and voice lines should sit at around -14 LUFS. These need to cut through clearly.

Background sounds like music and ambient noise should sit at around -20 LUFS. They create atmosphere but should not tire the player's ears.

True peak ceiling should be -1.5 dBTP. You leave headroom so intersample peaks do not cause clipping.

These numbers are not arbitrary. -14 LUFS is close to what Spotify and YouTube normalize to. The true peak ceiling follows EBU R128 broadcast practice, which caps true peak at -1 dBTP. -1.5 dBTP leaves a little extra margin on top of that.
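Once you have a loudness and a true peak reading for a file, applying the targets is simple arithmetic. The sketch below assumes you already measured both numbers with a meter of your choice. The function name and its return shape are made up for this post.

```python
def gain_for_target(measured_lufs, true_peak_dbtp,
                    target_lufs=-14.0, ceiling_dbtp=-1.5):
    """Return (gain in dB, whether the true peak ceiling limited the gain)."""
    wanted = target_lufs - measured_lufs      # gain needed to hit the loudness target
    headroom = ceiling_dbtp - true_peak_dbtp  # gain available before the ceiling
    gain_db = min(wanted, headroom)
    return gain_db, gain_db < wanted

# Hypothetical readings: a quiet footstep recording.
gain_db, limited = gain_for_target(measured_lufs=-23.0, true_peak_dbtp=-6.0)
scale = 10.0 ** (gain_db / 20.0)  # multiply every sample by this factor
print(f"apply {gain_db:+.1f} dB (x{scale:.2f}), limited by peak: {limited}")
```

When the ceiling limits the gain, plain scaling cannot reach the loudness target. A limiter or compressor is one common way to close that gap.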

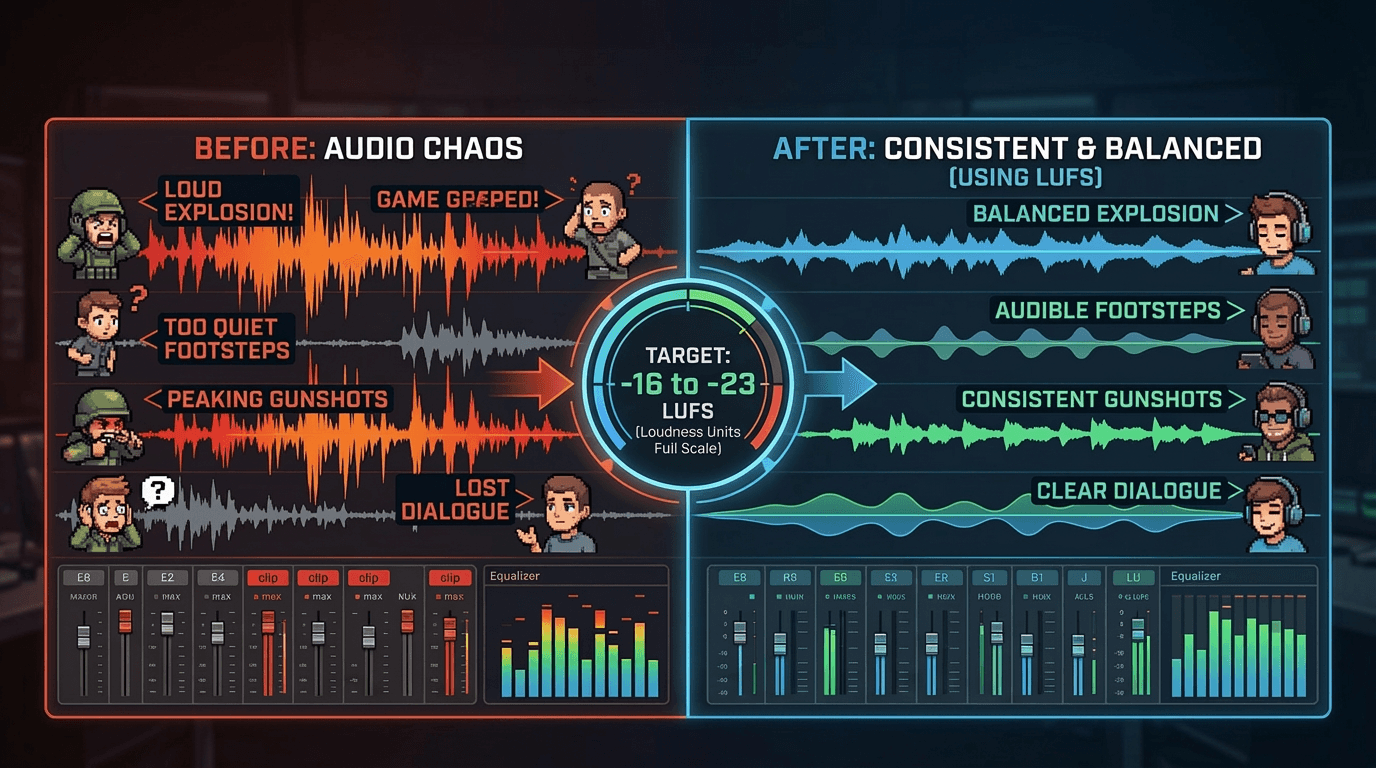

Before these targets your mix is chaotic. Some sounds blast. Some disappear. The player keeps adjusting their volume.

After these targets every sound sits in its right place. The player just plays the game and the audio feels right without thinking about it.