Redis is great

Just a guy who loves to write code and watch anime.

Introduction

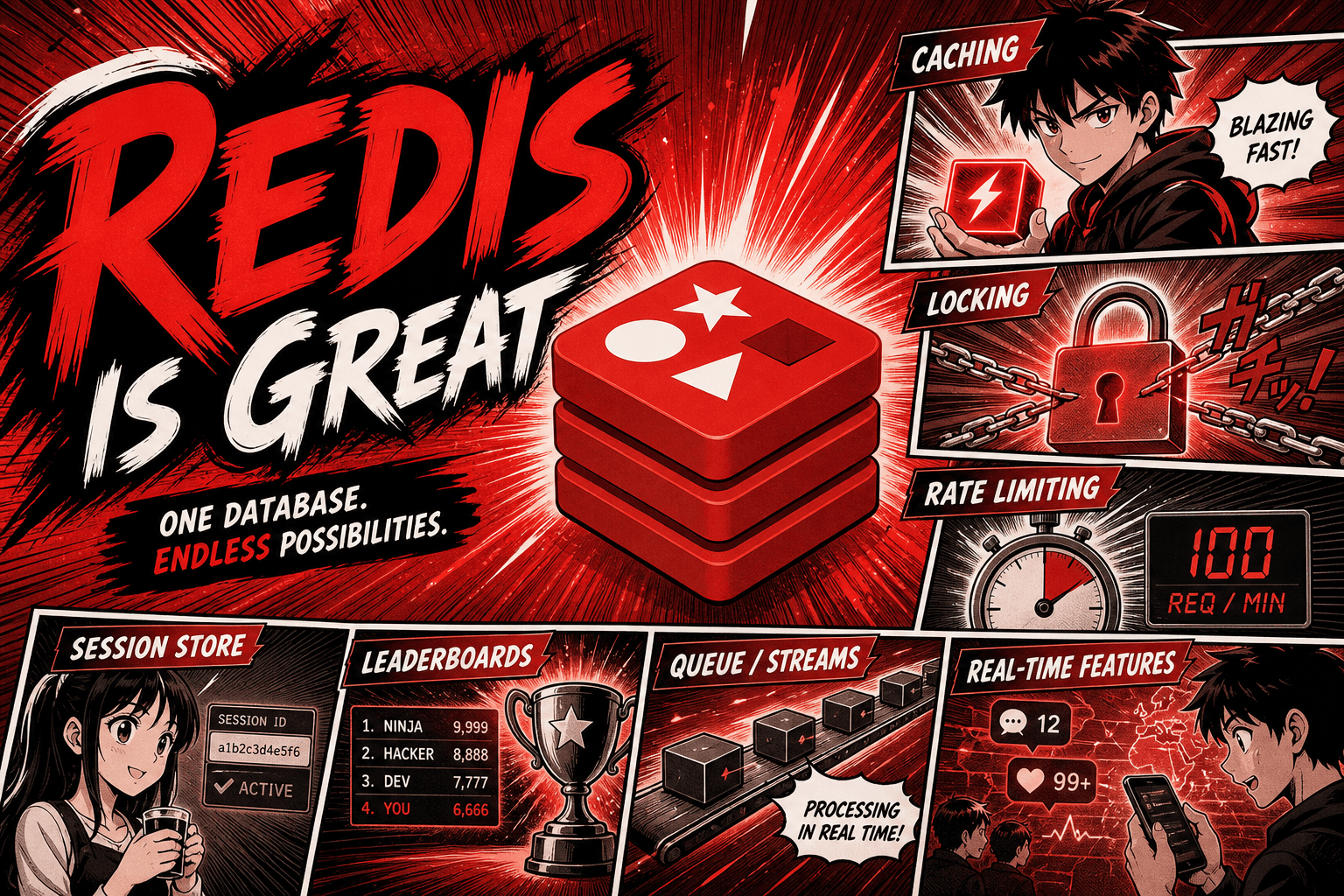

I've used Redis for a lot of things over the years. Rate limiting, caching, distributed locking, tracking peak concurrency, ephemeral counters, queues, deduplication, leaderboards. Every single time, it just worked.

This post is me appreciating Redis and explaining why it's so good at being a Swiss Army knife for backend systems.

What Redis actually is

Redis is an in-memory key-value store. That's the official description and it sells it short.

What it really is: a place to put data structures (strings, lists, sets, hashes, sorted sets, streams) and run atomic operations on them, fast, over a network.

Most people first encounter Redis as "a cache." It can be a cache. It's also way more than that.

Why it's fast

Redis stores everything in RAM. A read from RAM is on the order of 100,000x faster than a random read from a spinning disk and on the order of 1,000x faster than one from an SSD. So Redis just doesn't pay the storage tax that disk-backed databases pay.

Sub-millisecond response times for almost any operation. A typical Redis call from an application server on the same network takes well under a millisecond, and most of that is the network round trip. You can call it many times per request and barely move your latency budget.

That's the foundation. Everything good about Redis builds on top of "RAM is fast."

Single-threaded, and why that's actually great

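Here's the thing that surprises people. Redis runs on a single thread for command execution. One CPU core handles all your commands, in the order they arrive. (Redis 6 and later can hand network I/O to extra threads, but commands still execute one at a time.)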

This sounds like a downside. It's not. It's the secret weapon.

Why it's good:

Every command is atomic by default. Because there's only one thread, no two commands can interleave. You don't need locks. You don't need transactions for most things. You just run a command and it either fully happened or didn't.

No race conditions inside Redis itself. Multi-threaded systems spend huge amounts of complexity dealing with concurrent writes to the same data. Redis sidesteps the whole problem by not being concurrent.

Predictable performance. No thread contention, no lock waits, no cache invalidation issues between cores. Just a tight loop processing commands one at a time.

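Simple mental model. When you write code that uses Redis, you don't need to think about what happens if two commands hit the same key at once. The answer is always "one of them goes first, the other goes second, the result is deterministic." The guarantee is per command, though: a read followed by a write from your code is two commands, and another client can run in between them.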

The trade-off is throughput per server. Redis can't use 32 cores on a single instance. But it doesn't need to. A single Redis instance can do hundreds of thousands of operations per second on one core. For almost every workload, that's enough. If you outgrow it, you shard across multiple Redis instances.

Single-threaded isn't a limitation Redis works around. It's a deliberate design choice that simplifies everything.

What I've used Redis for

Caching

The classic use. Database query is slow? Cache the result in Redis with a TTL (time to live). Next request hits Redis instead of the database.

GET user:123:profile

→ if hit, return it

→ if miss, query DB, SET user:123:profile <profile> EX 300, return

Redis expires the key automatically after 5 minutes (300 seconds). No cleanup code. Done.
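Here's roughly what that flow looks like in Python. This is a sketch, not a definitive implementation: it assumes `r` is a redis-py client (something like `redis.Redis()`), and `load_from_db` is a hypothetical callable standing in for your slow query. Storing the profile as JSON is also my assumption.

```python
import json

PROFILE_TTL_SECONDS = 300  # 5 minutes, matching the EX 300 above


def get_profile(r, user_id, load_from_db):
    """Return a user's profile, reading through the Redis cache.

    r            -- a redis-py style client (assumed)
    load_from_db -- hypothetical callable that runs the slow query
    """
    key = f"user:{user_id}:profile"
    cached = r.get(key)
    if cached is not None:
        return json.loads(cached)  # cache hit

    profile = load_from_db(user_id)  # cache miss: go to the database
    # ex= sets the TTL in seconds, so the entry expires on its own.
    r.set(key, json.dumps(profile), ex=PROFILE_TTL_SECONDS)
    return profile
```

The first call for a user pays for the database query. Every call in the next five minutes is a single GET.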

Rate limiting

I want to limit a user to 100 requests per minute. Redis has INCR (increment) which is atomic. Combined with EXPIRE:

key = "ratelimit:user:123"

count = INCR key

if count == 1: EXPIRE key 60

if count > 100: reject

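Two Redis commands and one comparison. The first time a user hits the endpoint, the counter starts and gets a 60-second TTL. Every subsequent request increments it. After 60 seconds the key expires and the counter resets.

No lost updates. No "what if two requests hit at the exact same moment." Atomic INCR handles it. One caveat: INCR and EXPIRE are separate commands, so if the client dies between them the key never gets a TTL. Wrap both in a Lua script or MULTI/EXEC if that matters to you. This is also a fixed window, so a burst that straddles two windows can briefly exceed the limit.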
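The pseudocode above translates almost line for line into Python. A minimal sketch, assuming `r` is a redis-py client; the function name and defaults are mine, and it keeps INCR and EXPIRE as two separate calls exactly like the pseudocode does.

```python
LIMIT = 100          # max requests per window
WINDOW_SECONDS = 60  # window length in seconds


def allow_request(r, user_id, limit=LIMIT, window=WINDOW_SECONDS):
    """Fixed-window rate limiter. Returns True if the request may proceed."""
    key = f"ratelimit:user:{user_id}"
    count = r.incr(key)        # atomic; creates the key at 1 if it is missing
    if count == 1:
        r.expire(key, window)  # first hit in this window starts the clock
    return count <= limit
```

Call `allow_request(r, 123)` at the top of the handler and return a 429 when it comes back False.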

Distributed locking

Multiple servers, all want to do the same job. Only one should do it at a time. Redis can be the gatekeeper:

SET lock:job_xyz "server-A" NX EX 30

NX = only set if the key doesn't already exist. EX 30 = expire in 30 seconds (in case the server crashes before releasing the lock).

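If the SET succeeds, you got the lock. If it fails, someone else has it. The 30-second expiry means a dead server doesn't hold the lock forever. When the job finishes, delete the key, but only if it still holds your value. Otherwise you might release a lock that already expired and was taken by another server.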

There's a multi-instance variant called "Redlock," where a client has to acquire the lock on a majority of independent Redis instances for higher reliability, but the simple version above works for most use cases.
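A sketch of acquire and release for the simple single-instance version, assuming `r` is a redis-py client. Two things here are my assumptions rather than part of the pattern above: I store a random token instead of a server name, and I release with a small Lua script so that "check it's still mine, then delete" happens as one atomic step.

```python
import uuid

# Delete the lock only if it still holds our token. Running this as a
# Lua script makes the compare-and-delete a single atomic step.
RELEASE_SCRIPT = """
if redis.call('GET', KEYS[1]) == ARGV[1] then
    return redis.call('DEL', KEYS[1])
end
return 0
"""


def acquire_lock(r, name, ttl_seconds=30):
    """Try to take the lock. Returns a token on success, None otherwise."""
    token = str(uuid.uuid4())  # unique per holder
    # nx=True: only set if the key does not exist; ex=: expire after ttl_seconds.
    if r.set(f"lock:{name}", token, nx=True, ex=ttl_seconds):
        return token
    return None


def release_lock(r, name, token):
    """Release the lock if we still own it. Returns True if it was released."""
    return r.eval(RELEASE_SCRIPT, 1, f"lock:{name}", token) == 1
```

Keep the token from `acquire_lock` and pass it back to `release_lock`. If the job outlives the TTL and another server takes the lock, the release becomes a no-op instead of deleting their lock.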

Tracking peak concurrency

You want to know "how many users were online simultaneously at peak?" Redis counters make this nearly trivial.

Use INCR when a user connects, DECR when they disconnect. Track the max value with another key:

current = INCR concurrent_users

peak = GET peak_users

if current > peak: SET peak_users current

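INCR and DECR are atomic, so there's no "two users connected at the same time and we lost a count" bug. The peak update is different: GET, compare, SET is a read-modify-write across separate commands, so two servers can interleave and leave a stale peak behind. To close that gap, run the whole thing as a Lua script or inside a WATCH/MULTI/EXEC transaction.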
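One way to make the counter bump and the peak update a single atomic step is a short Lua script, since Redis runs a script start to finish without letting other commands in. This is a sketch assuming `r` is a redis-py client; the function names are mine, and the key names are the ones from the pseudocode above.

```python
# INCR the live counter and raise the peak in one script, so no other
# client can run between the read and the write.
CONNECT_SCRIPT = """
local current = redis.call('INCR', KEYS[1])
local peak = tonumber(redis.call('GET', KEYS[2]) or '0')
if current > peak then
    redis.call('SET', KEYS[2], current)
end
return current
"""


def user_connected(r):
    """Record a connection; returns the current concurrent-user count."""
    return r.eval(CONNECT_SCRIPT, 2, "concurrent_users", "peak_users")


def user_disconnected(r):
    """Record a disconnect; DECR is atomic on its own."""
    return r.decr("concurrent_users")


def peak_users(r):
    """Return the highest concurrency seen so far (0 if nothing recorded)."""
    return int(r.get("peak_users") or 0)
```

Disconnects don't need the script because they can never raise the peak.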

Queues

Redis lists work as FIFO queues (or LIFO stacks) out of the box. For a queue: LPUSH to add at one end, BRPOP to read from the other (blocking, so workers idle until something arrives).

producer: LPUSH jobs "send_email:user_42"

worker: BRPOP jobs 0 (blocks until something is in the queue)

Redis-backed job queue systems (Sidekiq, BullMQ, Celery with its Redis broker, etc.) start from this same idea before they grow into more complex setups. For simple workloads, a Redis list IS your queue.
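A minimal producer and worker in Python, assuming `r` is a redis-py client. Encoding jobs as JSON and the `handle` callback are my assumptions for the sketch; the queue name is the one from the example above.

```python
import json

QUEUE = "jobs"


def enqueue(r, job):
    """Producer: push a job onto the head of the list."""
    r.lpush(QUEUE, json.dumps(job))


def work_once(r, handle, timeout=0):
    """Worker: pop one job from the tail and handle it.

    timeout=0 blocks until a job arrives. Returns False if the
    timeout elapsed with nothing to do.
    """
    item = r.brpop(QUEUE, timeout=timeout)
    if item is None:
        return False
    _key, payload = item  # BRPOP returns (list name, value)
    handle(json.loads(payload))
    return True
```

A worker process is then just `while True: work_once(r, handle)`. Pushing at the head and popping from the tail is what makes it first in, first out.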

Deduplication

Don't want to send the same notification twice? Use a Redis set (the data structure, not the SET command):

SADD sent_notifications notification_id

→ returns 1 if newly added, 0 if already there

If the result is 1, send the notification. If it's 0, skip it. Atomic. Simple.
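In Python that check is a one-liner around SADD. A sketch assuming `r` is a redis-py client, with `send` as a hypothetical callable that does the actual delivery:

```python
def send_once(r, notification_id, send):
    """Send a notification only the first time its id is seen.

    Returns True if this call sent it, False if it was a duplicate.
    """
    # SADD returns the number of members actually added:
    # 1 if the id is new, 0 if it was already in the set.
    if r.sadd("sent_notifications", notification_id) == 1:
        send(notification_id)
        return True
    return False
```

Note the ordering: the id is recorded before the send happens, so a send that fails is not retried on the next call. Whether that's the right trade depends on whether a duplicate or a miss hurts more.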

Leaderboards

Sorted sets (ZSET) are the perfect data structure for leaderboards. Every entry has a score. Redis keeps them sorted.

ZADD leaderboard 9500 "player_42"

ZREVRANGE leaderboard 0 9 WITHSCORES (top 10)

ZREVRANK leaderboard "player_42" (their rank)

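Rank lookup and score updates are O(log N), and top N is O(log N + M) for M results. Building this with a SQL database is annoying. Redis makes it three commands. (Since Redis 6.2, ZREVRANGE is deprecated in favor of ZRANGE with the REV option, but it still works.)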
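Those three commands wrapped in Python look like this. A sketch assuming `r` is a redis-py client; the function names and the 1-based rank are my choices.

```python
def record_score(r, player, score):
    """Set (or overwrite) a player's score."""
    r.zadd("leaderboard", {player: score})


def top_players(r, n=10):
    """Return the top n as (player, score) pairs, highest score first."""
    return r.zrevrange("leaderboard", 0, n - 1, withscores=True)


def rank_of(r, player):
    """Return the player's 1-based rank, or None if they are not on the board."""
    rank = r.zrevrank("leaderboard", player)  # 0-based, None if absent
    return None if rank is None else rank + 1
```

Redis ranks start at 0, so the `+ 1` is only there to make the number something you'd show a player.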

The thing that ties it all together

Notice the pattern across all those examples. Each one is:

A specific data structure (string counter, set, list, sorted set)

An atomic operation on it

Often with a TTL so old data cleans itself up

That's the Redis playbook. Pick the right data structure for the problem. Use built-in atomic operations. Let TTL handle cleanup.

You almost never write complex code on top of Redis. The complexity is already inside Redis. Your code is glue.

Persistence (the part people argue about)

Redis is in-memory, but it can persist to disk. Two options:

RDB snapshots: periodic dump of the whole dataset to disk. Fast, but you can lose the last few minutes of data if Redis crashes.

AOF (append-only file): every write command is appended to a log on disk. Slower, but with the default once-per-second fsync you lose at most about a second of writes on crash.

You can combine them. For most use cases (caching, rate limiting, locks) you don't really care if Redis loses data on crash, because the underlying truth is in your real database. Redis is a fast layer on top.
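If you want both, you can switch them on at runtime with CONFIG SET. A sketch assuming `r` is a redis-py client and that your server allows CONFIG (some managed services disable it). The snapshot thresholds are illustrative values, not a recommendation.

```python
def enable_rdb_and_aof(r):
    """Turn on AOF alongside RDB snapshots at runtime."""
    r.config_set("appendonly", "yes")        # log every write command to the AOF
    r.config_set("appendfsync", "everysec")  # flush the AOF to disk once per second
    # RDB: snapshot after 900s if at least 1 key changed,
    # or after 300s if at least 10 keys changed.
    r.config_set("save", "900 1 300 10")
```

Settings changed this way last until the server restarts. To make them stick, put the same directives in redis.conf.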

For use cases where Redis IS the source of truth (a session store, a leaderboard you don't want to rebuild), persistence matters more. Even so, I would avoid using Redis as the primary persistent store. It just gets messy.

When NOT to use Redis

Redis is great. It's not a database replacement.

Don't use it for primary data storage unless you understand the persistence tradeoffs. Memory is finite and disks are forever.

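Don't store huge values. A Redis string can be as large as 512 MB, but you'll regret anything close to that: big values are slow to move over the network and hold up other clients while Redis handles them. Keep keys and values small.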

Don't use it as a queue if you need delivery guarantees. A job popped with BRPOP is gone from the list, so if the worker crashes mid-job, the job is lost. Use a real message broker (RabbitMQ, Kafka, NATS) for that. Redis lists are great for fire-and-forget jobs but not for "this MUST get processed exactly once" workloads.

Summary

Redis is an in-memory key-value store with rich data structures.

It's fast because RAM is fast.

It's single-threaded, which is a feature: every command is atomic, simple to reason about, no race conditions inside Redis.

It's a Swiss Army knife: caching, rate limiting, locks, queues, leaderboards, deduplication, counters, all with the same primitives.

The pattern is always: pick a data structure, use built-in atomic ops, let TTL clean up.

Don't try to make it a database. Use it as a fast, ephemeral layer on top of one.