How spatial audio works in games


Introduction

A while ago I started wondering how games make sound feel like it's coming from a real place. Like in Counter-Strike when you can hear someone walking through a room two corridors over, or in Hellblade where voices feel like they're whispering right behind you. Turns out it's a whole layered system. Let me walk through it from the basics up.

The most basic version: stereo panning

The simplest "spatial audio" isn't really spatial at all. It just makes a sound louder in your left ear or your right ear depending on where the source is.

Sound is to the left of you → boost the left speaker, reduce the right. Brain interprets this as "sound is on my left."

That's it. Games have been doing this for decades. It works, but it's crude: you can tell something is left or right, but not how far away it is, whether it's behind you, or whether there's a wall between you and it.
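Here's a minimal sketch of that left/right boost. It assumes a constant-power pan law (my choice, so the overall loudness stays steady as the source moves), and `pan_gains` is a made-up name, not something from a particular engine.

```python
import math

def pan_gains(azimuth_deg: float) -> tuple[float, float]:
    """Return (left_gain, right_gain) for a source at the given azimuth.

    azimuth_deg: -90 is hard left, 0 is straight ahead, +90 is hard right.
    """
    # Clamp out-of-range angles to hard left / hard right.
    az = max(-90.0, min(90.0, azimuth_deg))
    # Map [-90, 90] degrees onto a pan angle in [0, pi/2].
    theta = (az + 90.0) / 180.0 * (math.pi / 2)
    # cos/sin keep left^2 + right^2 equal to 1 at every position.
    return math.cos(theta), math.sin(theta)

left, right = pan_gains(-60.0)  # source well to the left: left > right
```

Notice that a source directly behind you gets the same gains as one directly in front. That's the front/back blindness described above.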

Adding distance

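Sounds get quieter the further away they are. In games, you typically use a falloff curve, usually clamped so that a sound right next to you doesn't blow up to infinite volume. The textbook version is the inverse-square law: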

volume = 1 / distance²

Far sounds quiet, close sounds loud. Combined with stereo panning, you now have a basic 3D audio system. Sound to the left and far = quiet on the left speaker. Sound to the right and close = loud on the right speaker.
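As a rough sketch, falloff plus panning fits in a few lines. The assumptions here are mine: a 2D world, a listener facing +y, a `min_distance` clamp so the gain never goes above 1, and hypothetical function names.

```python
import math

def distance_gain(distance: float, min_distance: float = 1.0) -> float:
    """Inverse-square falloff, clamped so the gain never exceeds 1."""
    d = max(distance, min_distance)
    return (min_distance / d) ** 2

def stereo_gains(listener, source, min_distance: float = 1.0):
    """Return (left, right) gains for a listener at `listener` facing +y."""
    dx = source[0] - listener[0]
    dy = source[1] - listener[1]
    distance = math.hypot(dx, dy)
    # pan = -1 is hard left, +1 is hard right (sine of the azimuth).
    pan = dx / distance if distance > 0 else 0.0
    theta = (pan + 1.0) * math.pi / 4  # constant-power pan angle
    gain = distance_gain(distance, min_distance)
    return gain * math.cos(theta), gain * math.sin(theta)

far_left = stereo_gains((0.0, 0.0), (-10.0, 0.0))    # quiet, mostly left
close_right = stereo_gains((0.0, 0.0), (1.0, 0.0))   # loud, mostly right
```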

This is what most games shipped with for decades. It's "spatial" in a loose sense, but it's missing something huge.

The problem this misses

Stand in a bathroom and clap. Stand in a forest and clap. Stand in a cathedral and clap.

The clap itself is identical in all three. But what your ears hear is completely different. Why?

Because sound bounces. The clap leaves your hands, hits the walls, hits the ceiling, hits the floor, hits the walls again. Each bounce arrives at your ears at a slightly different time, with a slightly different volume and a slightly different frequency balance (because most surfaces absorb high frequencies more than low ones).

Your brain processes all those echoes without you thinking about it. That's how you can tell you're in a small tile room versus an open forest, just from a single clap. Your brain is doing a kind of acoustic ray tracing automatically.

Games that just do "louder on the left" miss all of this. The result is sound that feels detached from the space. You hear an enemy somewhere to your right, but the audio doesn't tell you whether they're in the same room or down a corridor.
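To put rough numbers on the clap example, here's a small sketch that computes how late one echo arrives and how much weaker it is. It assumes a single flat wall, sound travelling at about 343 m/s, and the inverse-square falloff from earlier. `first_echo` is a name I made up.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly room temperature

def first_echo(source, listener, wall_x):
    """Extra delay (s) and relative energy of one bounce off the wall x = wall_x.

    Uses the mirror-image trick: reflect the source across the wall, then
    measure the straight line from that mirrored source to the listener.
    """
    image = (2 * wall_x - source[0], source[1])
    direct = math.dist(source, listener)
    reflected = math.dist(image, listener)
    extra_delay = (reflected - direct) / SPEED_OF_SOUND
    # Inverse-square falloff: energy ratio is the squared ratio of path lengths.
    relative_energy = (direct / reflected) ** 2
    return extra_delay, relative_energy

# Clap 1 m away from the listener, wall 2 m away (bathroom) vs 20 m (cathedral).
bathroom = first_echo((0.0, 0.0), (1.0, 0.0), wall_x=2.0)
cathedral = first_echo((0.0, 0.0), (1.0, 0.0), wall_x=20.0)
```

The bathroom echo lands a few milliseconds after the direct sound and is still fairly strong. The cathedral echo lands over a tenth of a second later and is much weaker. That gap is the kind of cue your brain reads as room size.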

What ray traced audio actually does

The pitch: simulate sound the way real sound works. Treat sound waves like rays bouncing off walls.

For each sound source in the game:

Shoot many rays out from the source in all directions.

Each ray bounces off surfaces it hits, losing some energy with each bounce.

Some rays eventually reach the listener (you).

Each ray that arrives carries info: how much energy it has left, how long it took to arrive, how its frequency was changed by the surfaces it bounced off.

Mix all those arriving rays together to produce the final sound.
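The steps above can be sketched in a toy 2D version. This is a simplified illustration, not how any particular engine does it. It assumes an empty rectangular room, one absorption value for every wall, perfect mirror reflections, and a listener modelled as a small circle. All names and defaults are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s

def trace_rays(source, listener, room_w, room_h, n_rays=360,
               max_bounces=8, absorption=0.3, listener_radius=0.25):
    """Return a list of (delay_seconds, energy) for rays that reach the listener."""
    arrivals = []
    for i in range(n_rays):
        angle = 2 * math.pi * i / n_rays
        px, py = source
        dx, dy = math.cos(angle), math.sin(angle)
        energy, travelled = 1.0, 0.0
        for _ in range(max_bounces + 1):
            # Distance along the ray to the next wall of the axis-aligned room.
            tx = ((room_w if dx > 0 else 0.0) - px) / dx if dx != 0 else math.inf
            ty = ((room_h if dy > 0 else 0.0) - py) / dy if dy != 0 else math.inf
            t_wall = min(tx, ty)
            # Closest point on this segment to the listener.
            t_near = (listener[0] - px) * dx + (listener[1] - py) * dy
            t_near = max(0.0, min(t_wall, t_near))
            miss = math.hypot(px + dx * t_near - listener[0],
                              py + dy * t_near - listener[1])
            if miss <= listener_radius:
                # The ray reached the listener: record its delay and energy.
                arrivals.append(((travelled + t_near) / SPEED_OF_SOUND, energy))
                break
            # Otherwise move to the wall, reflect, and lose some energy.
            px, py = px + dx * t_wall, py + dy * t_wall
            travelled += t_wall
            if tx <= ty:
                dx = -dx
            if ty <= tx:
                dy = -dy
            energy *= 1.0 - absorption
    return arrivals

arrivals = trace_rays(source=(1.0, 1.0), listener=(4.0, 3.0),
                      room_w=6.0, room_h=4.0)
```

There's no explicit distance falloff here. Fewer rays happen to hit a listener that is further away, which is how spreading loss shows up in this kind of simulation.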

So if you're standing around a corner from a gunshot, no direct ray reaches you. But rays that bounced off the corridor walls do reach you, slightly delayed, slightly muffled. You hear the shot as a bouncy, indirect sound. That's the sound telling you "this is around the corner, not in front of you."

If you're in a cathedral, hundreds of rays bounce around the huge space and arrive at you over a long stretch of time. Long reverb tail. You can hear the size of the room.

If you're in a small tile bathroom, rays bounce quickly and aggressively because surfaces are hard and close. Short, sharp reverb.

Same source sound, wildly different results depending on the geometry around you. Just like real life.
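One common way to do the "mix all the rays" step is to turn the arrivals into an impulse response and convolve the dry sound with it. Below is a minimal sketch in pure Python. It assumes arrivals are `(delay_seconds, energy)` pairs and ignores per-frequency effects. The function names are mine.

```python
import math

def impulse_response(arrivals, sample_rate=48000):
    """Place each arrival at its delay; amplitude is the square root of energy."""
    if not arrivals:
        return [0.0]  # nothing reached the listener: silence
    length = round(max(delay for delay, _ in arrivals) * sample_rate) + 1
    ir = [0.0] * length
    for delay, energy in arrivals:
        ir[round(delay * sample_rate)] += math.sqrt(energy)
    return ir

def convolve(dry, ir):
    """Direct-form convolution: every arrival adds a delayed, scaled copy."""
    out = [0.0] * (len(dry) + len(ir) - 1)
    for j, tap in enumerate(ir):
        if tap == 0.0:
            continue  # most taps are empty, so skip them
        for i, sample in enumerate(dry):
            out[i + j] += sample * tap
    return out

# One direct arrival plus one quieter echo 2 ms later.
ir = impulse_response([(0.0, 1.0), (0.002, 0.25)], sample_rate=1000)
wet = convolve([1.0, 0.0], ir)
```

A cathedral would give a long impulse response with lots of small late taps, a bathroom a short, dense one. Real engines use FFT-based convolution because the direct form above gets slow quickly.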

Why "ray traced"

Same reason graphics ray tracing has the name: you're literally tracing the path of a ray of energy through 3D space, hit by hit. It's the same basic algorithm, just applied to sound instead of light.

The difference is that sound is way more forgiving than light. Sound diffracts (bends around corners) more than light does, and our ears are way less precise than our eyes. So you don't need millions of rays per source. A few hundred is often enough.

How your ears localize sound

There's another piece I haven't mentioned yet. How does your brain know a sound is in front of you versus behind you, given that both ears hear it equally in both cases?

Your outer ear (the floppy bit, called the pinna) is shaped weirdly on purpose. It filters sound differently depending on which direction the sound came from. A sound from in front of you hits your ear ridges differently than a sound from behind. Your brain has learned, since you were a baby, to recognize these tiny frequency changes as "in front" versus "behind" versus "above" versus "below."

This is called the HRTF: head-related transfer function. It's basically a mathematical recording of how your specific head and ear shape filter sound from every direction.

Modern spatial audio runs incoming sound through an HRTF filter for each ray, simulating what the ray would sound like if it actually entered an ear shaped like a typical human ear. With headphones, this trick is shockingly effective. You can genuinely tell if a sound is above you, behind you, or in front of you, even though headphones only have two channels.

Without HRTF, ray traced audio still sounds cool, but you can't tell front from back. With HRTF layered on top, you get true 3D positioning.
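In code, applying an HRTF usually means picking a pair of short filters (one per ear) for the direction the sound arrives from and convolving the sound with each. The sketch below uses a tiny made-up table, not measured data. Real tables come from measurements on people or dummy heads and cover many more directions.

```python
def convolve(signal, kernel):
    """Direct-form convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out

# Hypothetical toy filters: azimuth in degrees -> (left ear, right ear).
# Front (0) and back (180) match in level and timing between the ears,
# and differ only in filter shape, which is the cue the pinna provides.
TOY_HRIRS = {
    0:   ([1.0], [1.0]),
    90:  ([0.0, 0.0, 0.5], [1.0]),   # right side: left ear later and quieter
    180: ([0.8, 0.1], [0.8, 0.1]),
    270: ([1.0], [0.0, 0.0, 0.5]),   # left side: right ear later and quieter
}

def angular_distance(a, b):
    """Smallest difference between two angles in degrees, with wraparound."""
    return abs((a - b + 180) % 360 - 180)

def binauralize(mono, azimuth_deg, table=TOY_HRIRS):
    """Filter a mono signal with the nearest direction's left/right filters."""
    nearest = min(table, key=lambda az: angular_distance(az, azimuth_deg))
    left_ir, right_ir = table[nearest]
    return convolve(mono, left_ir), convolve(mono, right_ir)

left, right = binauralize([1.0, 0.0], azimuth_deg=80)
```

In a ray traced system, each arriving ray would go through this with its own arrival direction before everything is summed per ear.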

Putting it all together

Modern spatial audio in games is layered:

Source sound (the gunshot, the footstep, the voice).

Ray traced propagation (how does this sound travel through the geometry to reach the listener?).

Per-ray frequency filtering (each surface absorbs different frequencies, so each bounce changes the sound's character).

HRTF (final filter that makes the sound feel directional in 3D when heard through headphones).

The game runs this whole chain in real time for every active sound source, then mixes the results into what you hear.
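The per-ray frequency filtering step is often done by tracking energy in a few frequency bands and scaling each band at every bounce. Here's a small sketch of that idea. The three bands and the absorption numbers are illustrative guesses, not measured material data.

```python
# Fraction of energy absorbed per bounce in (low, mid, high) bands.
# Illustrative values only.
MATERIALS = {
    "tile":    (0.01, 0.02, 0.03),
    "carpet":  (0.05, 0.30, 0.60),
    "curtain": (0.10, 0.50, 0.70),
}

def apply_bounces(band_energy, surfaces_hit):
    """Scale each band's energy by (1 - absorption) for every surface hit."""
    energy = list(band_energy)
    for name in surfaces_hit:
        for band, absorbed in enumerate(MATERIALS[name]):
            energy[band] *= 1.0 - absorbed
    return tuple(energy)

# Two bounces off carpet strip most of the highs; two off tile barely matter.
muffled = apply_bounces((1.0, 1.0, 1.0), ["carpet", "carpet"])
bright = apply_bounces((1.0, 1.0, 1.0), ["tile", "tile"])
```

That's why a carpeted room sounds dull and a tiled one sounds bright, even with the same geometry.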

The fanciness varies

Not every game does the full ray traced version. There's a spectrum:

Simple occlusion checks. Shoot one ray from the source to the listener. If it hits a wall, muffle the sound. Fast, cheap, way better than nothing.

Multi-bounce ray tracing. What I described above. Real reverb based on geometry.

Baked acoustic response. Run the expensive simulation offline, save the result, look it up at runtime. Very cheap at runtime, but doesn't react to dynamic geometry (closing a door doesn't change the sound). Microsoft's Project Acoustics works this way.

Full real-time simulation. What SDKs like Steam Audio can do when you skip the baking (Steam Audio supports baked data too). Expensive but reacts to everything.

Different games make different tradeoffs. Same underlying idea: sound should respect the geometry it travels through.
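The cheapest option on that list, the single-ray occlusion check, is easy to sketch. This version assumes a 2D world where walls are line segments, and it fakes the muffling with a one-pole low-pass filter plus a volume cut. The filter, the 0.5 volume factor and all the names are my assumptions.

```python
def segments_intersect(p1, p2, q1, q2):
    """True if segment p1-p2 properly crosses q1-q2 (touching doesn't count)."""
    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])
    d1, d2 = cross(q1, q2, p1), cross(q1, q2, p2)
    d3, d4 = cross(p1, p2, q1), cross(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0

def is_occluded(source, listener, walls):
    """Shoot one ray from source to listener and see if any wall blocks it."""
    return any(segments_intersect(source, listener, a, b) for a, b in walls)

def low_pass(samples, alpha=0.2):
    """One-pole low-pass filter; a smaller alpha sounds more muffled."""
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)
        out.append(y)
    return out

def render(samples, source, listener, walls):
    """Muffle and attenuate the sound if a wall blocks the direct path."""
    if is_occluded(source, listener, walls):
        return [0.5 * s for s in low_pass(samples)]
    return list(samples)

walls = [((2.0, -1.0), (2.0, 1.0))]  # one wall between x = 0 and x = 4
heard = render([1.0, 1.0, 1.0], source=(0.0, 0.0), listener=(4.0, 0.0), walls=walls)
```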

Where you can hear this

Counter-Strike 2 uses geometry-aware spatial audio for footsteps and gunfire. You can hear an enemy three rooms away through doorways with surprising accuracy.

Hellblade: Senua's Sacrifice is famous for its binaural audio. Worth playing with headphones just for the audio.

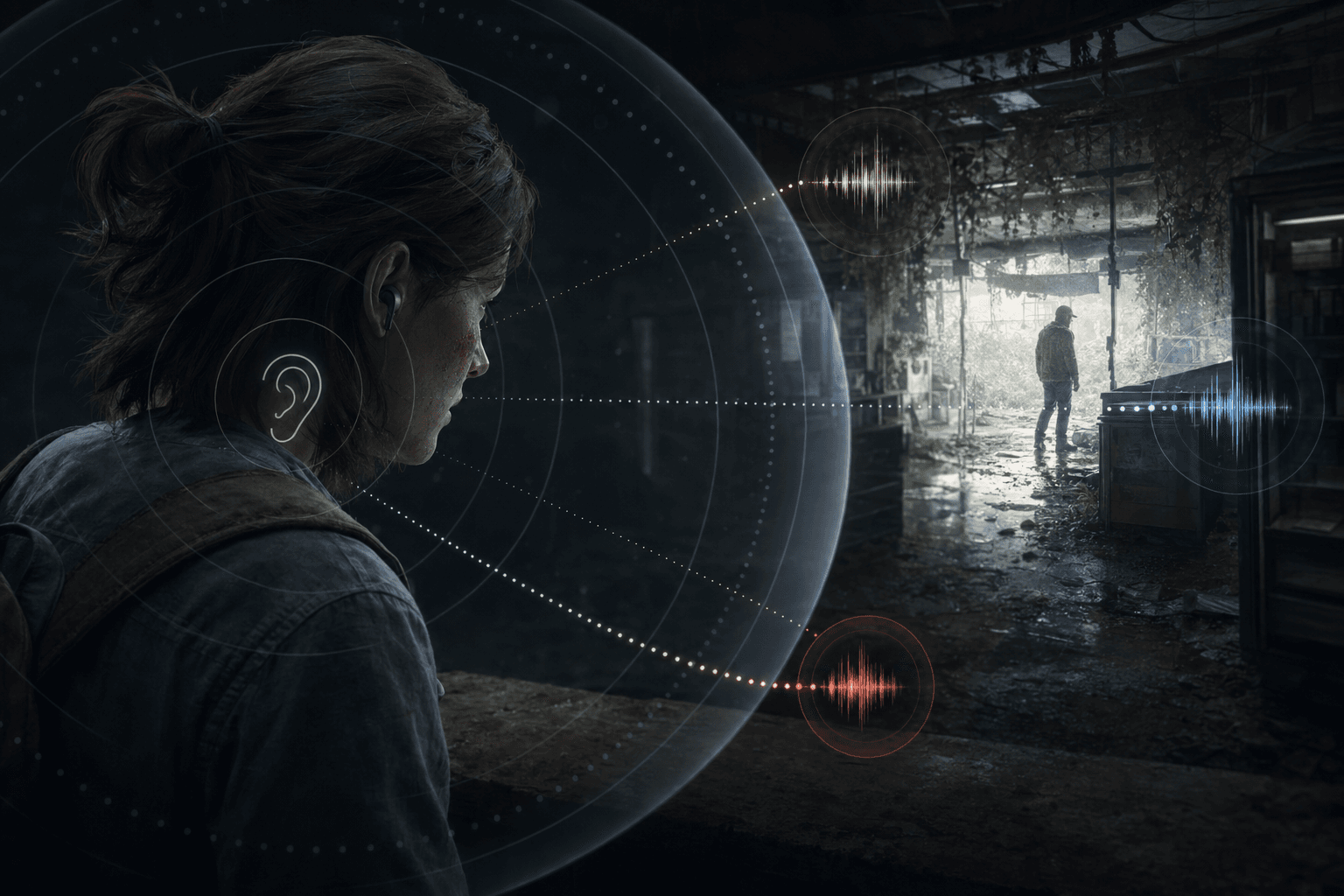

The Last of Us Part II uses occlusion and reverb extensively. Infected scuttling in the next room sounds like they're in the next room.

Hitman lets you eavesdrop on conversations through walls because the audio system muffles distant voices realistically.

Summary

Stereo panning: louder in one ear or the other. Crude.

Distance falloff: sounds get quieter further away. Combined with panning, gives basic 3D feel.

Ray traced audio: shoot rays from each sound source. Let them bounce off geometry. Mix the rays that reach the listener. Now sound respects walls, corridors, room sizes, surface materials.

HRTF: filter the result through a model of the human ear so your brain can tell front from back, up from down.

Why it's powerful: same source sound produces wildly different results in different spaces, just like in real life.