How Computers Draw 3D Worlds


Step 0. The Problem

A 3D scene inside a computer is just math. Points in space. Lines between them. Colors. Materials.

But your screen is flat. It is a grid of tiny squares called pixels. Each pixel can only show one color at a time.

So the big question is this: how do we turn a 3D scene (math) into a 2D image (pixels)?

That process is called rendering.

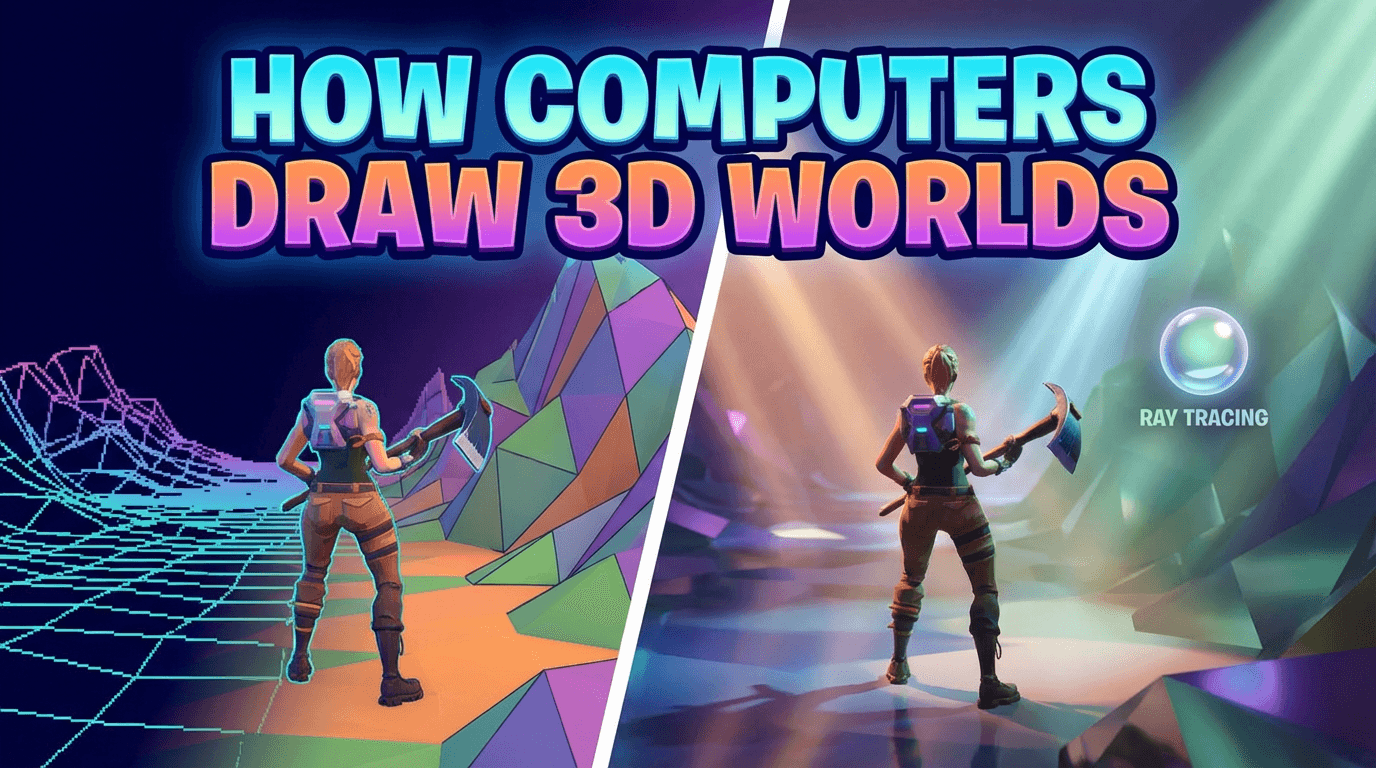

There are different ways to solve this problem. Each one is a trade-off between speed and realism.

1. Rasterization

This is the fastest of the methods covered here, and it has been the standard for real-time graphics for decades. Almost every video game you have ever played uses it.

The core idea

Take every 3D triangle in the scene. Figure out which pixels on the screen it covers. Color those pixels.

That is it. That is the whole trick.

3D models are built from triangles (thousands or millions of them). Rasterization goes through each triangle and asks: "which pixels does this triangle touch?" Then it fills those pixels in.
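Here is a minimal sketch of that question in Python, assuming the triangle has already been projected to 2D screen coordinates. The names `edge` and `rasterize_triangle` are made up for this example, and a real GPU does this in hardware with many more optimizations.

```python
def edge(a, b, p):
    """Signed area test: the sign tells which side of the edge a -> b the point p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize_triangle(v0, v1, v2, width, height):
    """Return the set of (x, y) pixels whose centers fall inside the triangle."""
    covered = set()
    for y in range(height):
        for x in range(width):
            p = (x + 0.5, y + 0.5)  # sample at the pixel center
            w0 = edge(v1, v2, p)
            w1 = edge(v2, v0, p)
            w2 = edge(v0, v1, p)
            # Inside if all three edge tests agree in sign (works for either winding order).
            all_positive = w0 >= 0 and w1 >= 0 and w2 >= 0
            all_negative = w0 <= 0 and w1 <= 0 and w2 <= 0
            if all_positive or all_negative:
                covered.add((x, y))
    return covered


# A small right triangle on an 8x8 "screen", printed as ASCII art.
pixels = rasterize_triangle((1, 1), (7, 1), (1, 7), 8, 8)
for y in range(8):
    print("".join("#" if (x, y) in pixels else "." for x in range(8)))
```

This version checks every pixel on the screen for every triangle, which is the simplest thing that works. Real rasterizers only look at the pixels near the triangle.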

Why it is fast

The computer only looks at the geometry. It does not care about light bouncing around the room. It does not care about reflections. It just projects triangles onto the screen and fills pixels.
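The "projects triangles onto the screen" step can be sketched like this. It is a simplified version that assumes the camera sits at the origin looking down the +z axis, ignores aspect ratio, and uses a made-up `focal_length` parameter. Real engines do this with 4x4 matrices.

```python
def project(point, width, height, focal_length=1.0):
    """Project a camera-space point (x, y, z) to pixel coordinates.

    Assumes the camera is at the origin, looking down +z, with y pointing up.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point is behind the camera")
    # Perspective divide: the farther away a point is, the closer it lands to the center.
    ndc_x = focal_length * x / z
    ndc_y = focal_length * y / z
    # Map the range [-1, 1] to pixels. Flip y because screen rows grow downward.
    px = (ndc_x + 1.0) * 0.5 * width
    py = (1.0 - ndc_y) * 0.5 * height
    return px, py


near = project((1.0, 0.0, 2.0), 640, 480)
far = project((1.0, 0.0, 4.0), 640, 480)
print(near, far)
```

The same point twice as far away lands closer to the middle of the screen. That one division by `z` is where perspective comes from.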

This is something graphics cards (GPUs) are extremely good at. They can do this for millions of triangles, sixty or more times per second.

The big problem

Rasterization knows where things are. But it does not know how light behaves.

If you want a shadow, the game has to fake it. If you want a reflection in a puddle, the game has to fake it. If you want soft light bouncing off a red wall onto a white floor (making the floor slightly pink), the game has to fake it.

Games have gotten very good at faking these things over the years. But they are still fakes.

2. Ray Tracing (the one you care about)

This one is interesting. It's also the one that took me a moment to grasp.

Start with how real light works

In the real world, light comes out of a source (sun, lamp, fire). It travels in straight lines. It bounces off surfaces. Some of those bounces eventually hit your eyes. That is how you see things.

Every color you see comes from photons (tiny particles of light) that bounced around the world and ended up in your eye.

The naive idea

What if we simulated this inside the computer? Shoot millions of light rays from every lamp. Let them bounce around. Wait for some of them to hit a virtual "eye" (the camera).

This would work. It would look perfect.

But it would be insanely wasteful. Most light rays from a lamp never reach your eye. They go off into empty space or get absorbed. You would be simulating trillions of rays and almost none of them matter.
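To get a rough feel for how wasteful, here is a back-of-the-envelope calculation. It assumes a point light that shines equally in all directions and a small circular lens facing it, and it only counts rays that hit the lens directly (no bounces). The numbers (a 1 cm lens, 3 meters away) are made up for illustration.

```python
import math


def direct_hit_fraction(aperture_radius, distance):
    """Fraction of rays from an isotropic point light that land directly on a small disc."""
    disc_area = math.pi * aperture_radius ** 2
    sphere_area = 4.0 * math.pi * distance ** 2  # all the directions the light can go
    return disc_area / sphere_area


fraction = direct_hit_fraction(aperture_radius=0.01, distance=3.0)
print(f"{fraction:.2e} of the rays hit the lens directly")
print(f"that is about 1 ray in {round(1 / fraction):,}")
```

Bounced light helps a little, but the overall picture stays the same: nearly all the work goes into rays nobody will ever see.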

The clever flip

Here is the key insight that makes ray tracing actually work:

Do it backwards.

Instead of shooting rays from the lamp and hoping they hit the camera, shoot rays from the camera and see what they hit.

Why? Because we only care about light that reaches the eye anyway. So start from the eye. Go backwards. Trace the light ray in reverse until it finds a light source.

How a single ray actually works

Imagine you are the computer. You want to figure out the color of one pixel on the screen.

You shoot an imaginary ray from the camera, through that pixel, into the 3D scene.
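A minimal sketch of building that ray, assuming a simple pinhole camera at the origin looking down +z. The function name `camera_ray` and the 90 degree default field of view are choices made for this example.

```python
import math


def camera_ray(px, py, width, height, fov_degrees=90.0):
    """Return (origin, direction) for the ray through the center of pixel (px, py)."""
    aspect = width / height
    scale = math.tan(math.radians(fov_degrees) / 2.0)
    # Turn the pixel into a point on an imaginary screen one unit in front of the camera.
    x = (2.0 * (px + 0.5) / width - 1.0) * aspect * scale
    y = (1.0 - 2.0 * (py + 0.5) / height) * scale  # flip y: row 0 is the top
    z = 1.0
    length = math.sqrt(x * x + y * y + z * z)
    origin = (0.0, 0.0, 0.0)
    direction = (x / length, y / length, z / length)  # unit length
    return origin, direction


origin, direction = camera_ray(1, 1, 3, 3)  # the middle pixel of a 3x3 image
print(direction)
```

The middle pixel looks straight ahead. Pixels toward the edges get rays that tilt outward.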

The ray travels in a straight line until it hits something. Let's say it hits a shiny red apple.
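"Hits something" is a math question. Here is a sketch for the simplest shape, a sphere (pretend the apple is perfectly round). It assumes the ray direction has length 1, and the function name `hit_sphere` is made up for this example.

```python
import math


def hit_sphere(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit, or None for a miss.

    Solves |origin + t * direction - center|^2 = radius^2 for t.
    Assumes `direction` is unit length, so the quadratic's leading term is 1.
    """
    oc = [o - c for o, c in zip(origin, center)]
    b = 2.0 * sum(d * e for d, e in zip(direction, oc))
    c = sum(e * e for e in oc) - radius * radius
    discriminant = b * b - 4.0 * c
    if discriminant < 0.0:
        return None  # the ray misses the sphere entirely
    root = math.sqrt(discriminant)
    for t in ((-b - root) / 2.0, (-b + root) / 2.0):
        if t > 1e-6:  # ignore hits behind (or exactly at) the ray origin
            return t
    return None


t = hit_sphere((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 0.0, 5.0), 1.0)
print(t)
```

A real scene is made of triangles, so a real ray tracer uses a ray-triangle test instead, plus a tree structure so it does not have to test every triangle.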

Now you ask: "how much light is hitting this exact spot on the apple?"

To answer that, you shoot more rays from that spot. One ray toward each light source to check: "is this spot in shadow, or does light reach it?" If something is blocking the path to the lamp, this spot is in shadow. If not, light reaches it.
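The shadow check can be sketched like this, again using spheres as stand-in objects. The names `hit_sphere` and `in_shadow` are made up for this example, and the scene is just a list of `(center, radius)` pairs.

```python
import math


def hit_sphere(origin, direction, center, radius):
    """Distance to the nearest hit along a unit-length ray, or None."""
    oc = [o - c for o, c in zip(origin, center)]
    b = 2.0 * sum(d * e for d, e in zip(direction, oc))
    c = sum(e * e for e in oc) - radius * radius
    discriminant = b * b - 4.0 * c
    if discriminant < 0.0:
        return None
    root = math.sqrt(discriminant)
    for t in ((-b - root) / 2.0, (-b + root) / 2.0):
        if t > 1e-6:
            return t
    return None


def in_shadow(point, light_pos, spheres):
    """True if any sphere sits between `point` and the light."""
    to_light = [l - p for l, p in zip(light_pos, point)]
    distance = math.sqrt(sum(v * v for v in to_light))
    direction = [v / distance for v in to_light]
    for center, radius in spheres:
        t = hit_sphere(point, direction, center, radius)
        # Only hits closer than the light count as blockers.
        if t is not None and t < distance:
            return True
    return False


lamp = (0.0, 10.0, 0.0)
print(in_shadow((0.0, 0.0, 0.0), lamp, [((0.0, 5.0, 0.0), 1.0)]))  # blocker in the way
print(in_shadow((0.0, 0.0, 0.0), lamp, [((5.0, 5.0, 0.0), 1.0)]))  # blocker off to the side
```

Note the `t < distance` check. Something behind the lamp cannot cast a shadow on you.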

If the surface is shiny, you also shoot a ray in the reflection direction, to see what is being reflected.
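The reflection direction comes from the standard mirror formula r = d - 2(d·n)n, where d is the incoming direction and n is the surface normal (the direction pointing straight out of the surface). A small sketch, assuming both are unit length:

```python
def reflect(direction, normal):
    """Mirror `direction` about a surface with unit-length `normal`."""
    d_dot_n = sum(d * n for d, n in zip(direction, normal))
    return tuple(d - 2.0 * d_dot_n * n for d, n in zip(direction, normal))


# A ray heading down and to the right hits a floor whose normal points up.
bounced = reflect((1.0, -1.0, 0.0), (0.0, 1.0, 0.0))
print(bounced)
```

The sideways part of the ray stays the same. Only the part going into the surface gets flipped.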

If the surface is glass, you shoot a ray that bends through it (refraction).
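How much the ray bends follows Snell's law. Here is a sketch of the usual vector form, assuming unit-length inputs and a normal that points back toward the side the ray came from. The parameter `eta` is the ratio of the two refractive indices (for example about 1 / 1.5 going from air into typical glass).

```python
import math


def refract(direction, normal, eta):
    """Bend a unit-length ray passing through a surface.

    `normal` points against the incoming ray. `eta` = n_outside / n_inside.
    Returns the new unit direction, or None on total internal reflection.
    """
    cos_i = -sum(d * n for d, n in zip(direction, normal))
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)  # Snell: sin_t = eta * sin_i
    if sin2_t > 1.0:
        return None  # the light cannot get through, it reflects instead
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * d + (eta * cos_i - cos_t) * n
                 for d, n in zip(direction, normal))


s = math.sqrt(0.5)
incoming = (s, -s, 0.0)  # hitting the glass at 45 degrees
bent = refract(incoming, (0.0, 1.0, 0.0), 1.0 / 1.5)
print(bent)
```

The ray comes out steeper than it went in, which is why a straw looks broken in a glass of water.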

Each of those new rays might hit another surface, which might also be shiny or glassy, so you shoot more rays from there. And so on.

After a few bounces, you stop. You collect all the information. You calculate the final color for that pixel.
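Putting the steps together, here is a tiny recursive sketch. Everything in it is an assumption made for illustration: the two-sphere scene, the light position, the simple shading formula, and a bounce limit of 3. It skips refraction to stay short.

```python
import math

MAX_DEPTH = 3                  # stop after this many bounces
LIGHT_POS = (5.0, 5.0, -5.0)   # one point light (made-up position)
BACKGROUND = (0.2, 0.3, 0.5)   # color when a ray hits nothing
# Each sphere: (center, radius, color, reflectivity). A made-up test scene.
SPHERES = [
    ((0.0, 0.0, 5.0), 1.0, (1.0, 0.1, 0.1), 0.3),  # the shiny red "apple"
    ((2.5, 0.0, 6.0), 1.0, (0.9, 0.9, 0.9), 0.8),  # a mirror-like ball
]


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def mul(a, s):
    return tuple(x * s for x in a)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def nearest_hit(origin, direction):
    """Return (distance, sphere) for the closest sphere along a unit ray, or None."""
    best = None
    for sphere in SPHERES:
        oc = sub(origin, sphere[0])
        b = 2.0 * dot(direction, oc)
        c = dot(oc, oc) - sphere[1] ** 2
        disc = b * b - 4.0 * c
        if disc < 0.0:
            continue
        root = math.sqrt(disc)
        for t in ((-b - root) / 2.0, (-b + root) / 2.0):
            if t > 1e-6:
                if best is None or t < best[0]:
                    best = (t, sphere)
                break
    return best


def trace(origin, direction, depth=0):
    """Return an (r, g, b) color for one ray, recursing on reflections."""
    hit = nearest_hit(origin, direction)
    if hit is None:
        return BACKGROUND
    t, (center, radius, color, reflectivity) = hit
    surface = add(origin, mul(direction, t))
    normal = mul(sub(surface, center), 1.0 / radius)
    point = add(surface, mul(normal, 1e-4))  # nudge off the surface to avoid self-hits

    # Shadow ray: is anything between this spot and the light?
    to_light = sub(LIGHT_POS, point)
    light_dist = math.sqrt(dot(to_light, to_light))
    light_dir = mul(to_light, 1.0 / light_dist)
    blocker = nearest_hit(point, light_dir)
    lit = blocker is None or blocker[0] > light_dist
    diffuse = max(0.0, dot(normal, light_dir)) if lit else 0.0
    result = mul(color, 0.1 + 0.9 * diffuse)  # 0.1 is a small ambient term

    # Reflection ray: recurse, but only up to MAX_DEPTH bounces.
    if reflectivity > 0.0 and depth < MAX_DEPTH:
        mirror_dir = sub(direction, mul(normal, 2.0 * dot(direction, normal)))
        bounced = trace(point, mirror_dir, depth + 1)
        result = add(mul(result, 1.0 - reflectivity), mul(bounced, reflectivity))
    return result


print(trace((0.0, 0.0, 0.0), (0.0, 0.0, 1.0)))  # a ray that hits the red sphere
```

The `depth < MAX_DEPTH` check is the "after a few bounces, you stop" part. Without it, two mirrors facing each other would keep the function calling itself forever.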

Then you do this for every single pixel on the screen. Millions of them.

Why this looks so good

Because you are actually simulating how light behaves.

Shadows happen naturally. If a shadow ray gets blocked, the spot is in shadow. No fakery.

Reflections happen naturally. A reflection ray just bounces and shows you what is there.

Glass and water happen naturally. Rays bend through them.

Colored light bouncing off walls can happen naturally too, as long as you keep following the bounces. The ray picks up the color of what it touches.

Everything comes from the same simple rule: follow the ray and see what it hits.

Why it is slow

Every pixel needs at least one ray, and usually many more (for reflections, shadows, bounces). A 4K screen has about 8.3 million pixels (3840 × 2160). You might need 10, 50, even hundreds of rays per pixel for a clean image.
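The arithmetic is worth seeing written out. This assumes a 3840 × 2160 screen and a target of 60 frames per second. The rays-per-pixel values are just sample points.

```python
WIDTH, HEIGHT = 3840, 2160  # a 4K screen
FPS = 60                    # a common target for games

pixels = WIDTH * HEIGHT
print(f"pixels per frame: {pixels:,}")

for rays_per_pixel in (1, 10, 50, 100):
    per_frame = pixels * rays_per_pixel
    per_second = per_frame * FPS
    print(f"{rays_per_pixel:>3} rays/pixel: {per_frame:,} rays per frame, "
          f"{per_second:,} rays per second")
```

Even at 10 rays per pixel, that is roughly 5 billion rays every second.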

That is a lot of rays. For a long time, ray tracing was only used for movies, where one frame could take hours to render. Real-time ray tracing (in games) only became possible recently, because GPUs got special hardware to accelerate it (starting around 2018 with Nvidia's RTX cards).

3. Path Tracing

Path tracing is ray tracing taken to its logical extreme.

The difference

Regular ray tracing usually handles a few specific effects: sharp reflections, sharp shadows, refraction. It still cheats on some things, like soft indirect light.

Path tracing says: no cheats. Simulate every possible light path.

When a ray hits a surface, path tracing does not just follow one reflection ray. It samples many directions, because in reality light bounces off a rough surface in all directions, not just one. (In practice it usually picks one random direction per bounce and repeats the whole path many times per pixel, then averages the results.) Then each path bounces again. And again.

This captures something called global illumination: the way light bounces many times and fills a room with soft, indirect light.
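The "all directions" part can be sketched as picking a random direction on the outward side of the surface. This is a simple uniform version, assuming a unit-length normal. Production path tracers usually weight the choice toward directions that matter more, which this sketch does not do.

```python
import math
import random


def random_unit_vector(rng):
    """Pick a uniformly random direction by rejection sampling inside a unit ball."""
    while True:
        v = (rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0))
        length_squared = sum(c * c for c in v)
        if 1e-12 < length_squared <= 1.0:
            length = math.sqrt(length_squared)
            return tuple(c / length for c in v)


def random_bounce_direction(normal, rng):
    """Random direction in the hemisphere around `normal` (away from the surface)."""
    v = random_unit_vector(rng)
    if sum(a * b for a, b in zip(v, normal)) < 0.0:
        v = tuple(-c for c in v)  # flip directions that point into the surface
    return v


rng = random.Random(0)  # seeded so runs are repeatable
floor_normal = (0.0, 1.0, 0.0)
for _ in range(3):
    print(random_bounce_direction(floor_normal, rng))
```

Every direction it returns points up and away from the floor, never down into it.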

The trade-off

Path tracing is even slower than basic ray tracing, because you are following many random paths per pixel and tracking many bounces along each one.

It also produces noisy images at first (grainy, like an old photo). You need a lot of rays per pixel to smooth it out. Modern games use AI denoisers to clean up the noise quickly.
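Here is a toy experiment that shows where the grain comes from. It is not a renderer. It pretends each random path either finds the light (1) or does not (0), with a made-up 50% chance, and measures how much the pixel's average jumps around for different sample counts.

```python
import random
import statistics


def noisy_pixel(samples, rng, hit_probability=0.5):
    """Average of `samples` random paths, each either finding the light (1) or not (0)."""
    hits = sum(1 for _ in range(samples) if rng.random() < hit_probability)
    return hits / samples


rng = random.Random(42)  # seeded so runs are repeatable
for samples in (1, 16, 256, 4096):
    # Render the "same pixel" 200 times and see how much the answer varies.
    estimates = [noisy_pixel(samples, rng) for _ in range(200)]
    spread = statistics.pstdev(estimates)
    print(f"{samples:5d} samples/pixel -> noise (std dev) about {spread:.3f}")
```

The noise shrinks roughly with the square root of the sample count, so halving the grain costs about four times as many rays. That is why denoisers are such a big deal.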

But when it works, it is among the most realistic rendering methods available. It is what modern animated films, such as Pixar's, use. And games like Cyberpunk 2077 and Alan Wake 2 now offer path tracing modes.

4. Lumen

Lumen is not a new rendering method. It is a system built by Epic Games for Unreal Engine 5.

What it does

Lumen tries to give you global illumination (that soft, bouncing, realistic light from path tracing) without the massive cost of full path tracing.

It is a clever hybrid. It uses a mix of tricks:

It uses simplified versions of the scene (lower detail) to trace rays quickly.

It uses screen-space tricks: looking at what is already on the screen to figure out reflections.

It uses ray tracing for things that need it.

It caches (saves) light calculations and reuses them across frames, so it does not redo work every frame.
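To give a feel for the caching idea only, here is a generic sketch of blending a new, noisy lighting estimate into a saved one each frame. This is not Lumen's actual algorithm or API. The function `blend`, the blend factor `alpha`, and the noise range are all made up for illustration.

```python
import random


def blend(cached, new_sample, alpha=0.1):
    """Move the cached value a small step toward the new sample."""
    return cached + alpha * (new_sample - cached)


rng = random.Random(7)  # seeded so runs are repeatable
true_light = 0.6        # the brightness we would get with unlimited rays
cached = None

for frame in range(60):
    # Each frame we can only afford a cheap, noisy estimate.
    sample = true_light + rng.uniform(-0.3, 0.3)
    cached = sample if cached is None else blend(cached, sample)

print(f"cached value after 60 frames: {cached:.3f} (true value {true_light})")
```

A small `alpha` gives a smoother result but reacts slowly when the lighting changes. A large one reacts fast but lets more noise through. Any system that reuses work across frames has to pick a point on that scale.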

Why it matters

Full path tracing is too expensive for most games on most hardware. Lumen gets you most of the visual benefit (bouncing light, soft shadows, dynamic reflections) at a fraction of the cost. It makes realistic lighting possible on normal gaming PCs and consoles.

It is less accurate than true path tracing. But it is fast enough to run in real time.