Data Locality: Why Your Data Layout Decides Your Game's Speed.

Just a guy who loves to write code and watch anime.

Introduction

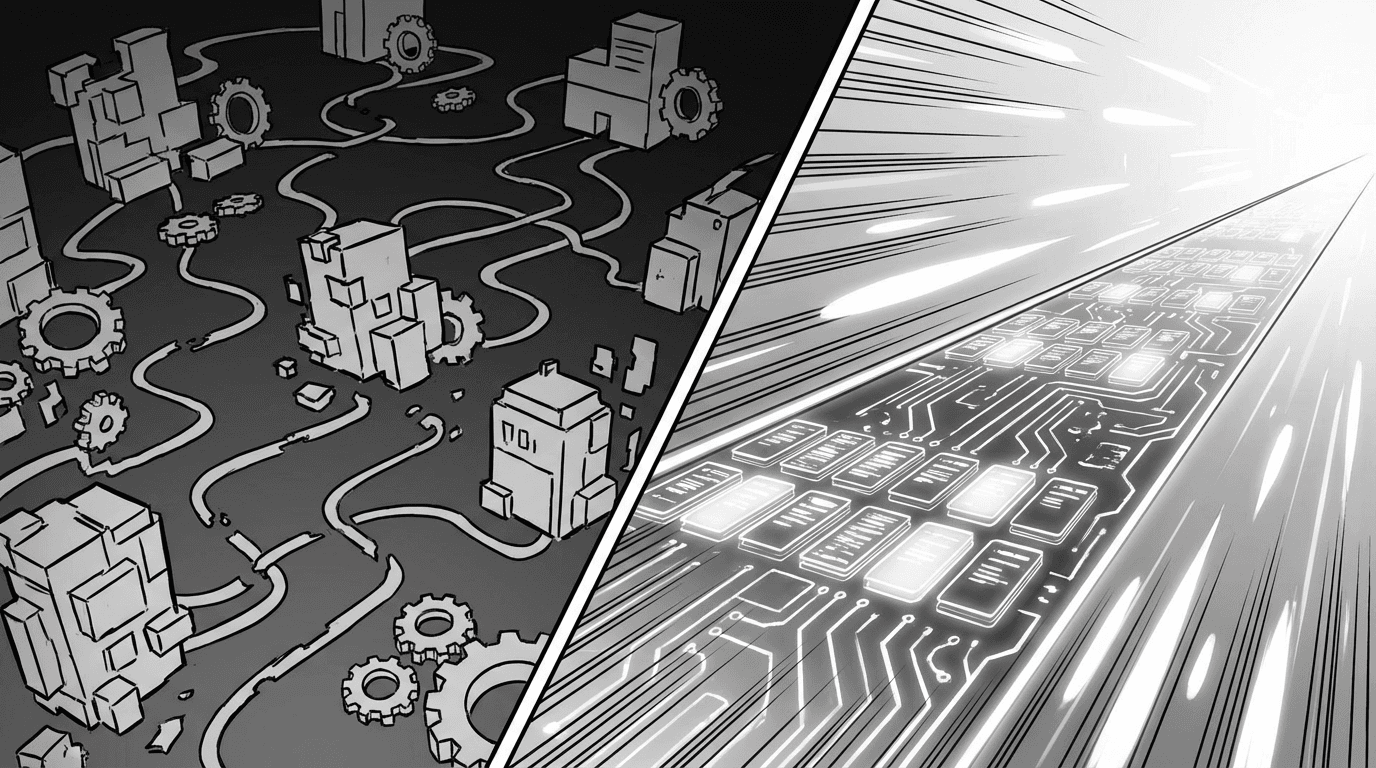

Most programmers think performance is about code. Better algorithms. Fewer loops. Less unnecessary work. That is all true. But there is another factor that can make your game 50x faster or slower. It has nothing to do with your logic. It is about where your data sits in memory.

The Problem.

CPUs are fast. Getting data from RAM is not. It can take hundreds of cycles to fetch a byte from main memory. Your CPU finishes its work in a few cycles, then sits idle waiting for data.

Caching.

Hardware engineers solved this by putting a tiny chunk of very fast memory right on the chip. This is the cache.

When the CPU needs one byte from RAM, it does not grab just that byte. It grabs a whole chunk of nearby memory, usually 64 to 128 bytes. This chunk is called a cache line.

The CPU is betting that the next thing you need is right next to the thing you just used. If it is, you get it almost instantly. Cache hit. If it is not, you wait hundreds of cycles. Cache miss.

The goal is to arrange your data so the CPU wins that bet as often as possible.

Keep Your Data Together.

If you process things in order and those things are next to each other in memory, the CPU loads them into the cache together. Every access is a hit.

If your data is scattered across memory connected by pointers, every access is a miss.

Same algorithm. Same number of operations. 50x difference in speed. The only variable is how the data is arranged.

The Classic Mistake.

Most game code following typical object oriented patterns does something like this.

Entity[] entities; // array of pointers to entities

Each entity has a pointer to an AI component. Each AI component is allocated separately on the heap. When you loop through and update AI, every iteration follows two pointers. One to the entity, one to the component. Each pointer is a likely cache miss. Most of the time is spent waiting for memory.

The Fix. Flat Contiguous Arrays.

Use an array of actual objects packed together, not an array of pointers to objects scattered everywhere.

// Before. Pointers everywhere.

const entities: Entity[] = [...];

for (let i = 0; i < count; i++) {

entities[i].getAI().update(); // two pointer chases per entity

}

// After. Packed together.

const aiComponents: AIComponent[] = new Array(MAX_ENTITIES);

for (let i = 0; i < count; i++) {

aiComponents[i].update(); // straight walk through memory

}

No pointers. The CPU streams through a straight line of data. Each cache line loads several components at once. By the time you finish one, the next ones are already cached.

This single change can make your update loop 50x faster.

Technique 1. Pack Active Objects Together.

Say you have a particle system. 100,000 particles, but only 5,000 are active at any time. If you loop through all of them and check an "isActive" flag, you waste cache loading dead particles just to skip them.

Fix. Keep active particles at the front of the array. Track how many are active with a counter.

class ParticleSystem {

particles: Particle[] = new Array(MAX_PARTICLES);

numActive = 0;

activate(index: number) {

const temp = this.particles[this.numActive];

this.particles[this.numActive] = this.particles[index];

this.particles[index] = temp;

this.numActive++;

}

deactivate(index: number) {

this.numActive--;

const temp = this.particles[this.numActive];

this.particles[this.numActive] = this.particles[index];

this.particles[index] = temp;

}

update() {

for (let i = 0; i < this.numActive; i++) {

this.particles[i].update();

}

}

}

Every byte the CPU loads is a particle you actually need to process. No gaps. No wasted cache lines. No branch prediction failures from checking flags.

You also do not need an "isActive" flag anymore. A particle is active if its index is less than numActive. That makes each particle smaller, so more fit per cache line.

Technique 2. Hot and Cold Splitting.

Not all data on an object is used at the same frequency. An enemy AI component has position, energy, animation state that get touched every frame. But it also has loot drop data that only matters once, when the enemy dies.

If all that data is packed together, the cache loads loot data every frame even though nothing touches it. That wastes space in the cache line and causes more misses.

Fix. Split into "hot" data you touch every frame and "cold" data you rarely need.

// Hot. Touched every frame.

class AIComponent {

animation: Animation;

energy: number;

goalPos: Vector;

lootData: LootDrop; // pointer to cold data

}

// Cold. Touched once in the object's lifetime.

class LootDrop {

dropType: string;

minDrops: number;

maxDrops: number;

chanceOfDrop: number;

}

The hot array stays small and tight. More components fit per cache line. The cold data only gets pulled into cache when you actually need it.

The Trade Off.

Traditional OOP makes this hard. Interfaces and virtual methods use pointers. Inheritance means objects can be different sizes, so you cannot pack them in a flat array. The way OOP encourages you to structure data is basically the opposite of what the cache wants.

This is one reason why ECS and data oriented design exist. They naturally organize data the way the hardware wants it. Flat arrays of components, processed by system, no pointer chasing. The cache friendly approach is not a sacrifice. It is just a better default.

The Rules.

Profile first. Only optimize code that is actually slow.

Make sure the slowness is from cache misses, not bad algorithms.

Use flat arrays of actual objects, not arrays of pointers.

Process objects in the order they sit in memory.

Pack active objects together. Do not skip over dead ones.

Separate hot data from cold data.

Minimize pointer chasing in hot loops.

One Sentence Summary.

Your CPU does not care how clever your code is. It cares how close together your data is.